Google Veo AI 3.1 Update: ‘Ingredients to Video’ Masters Vertical Consistency

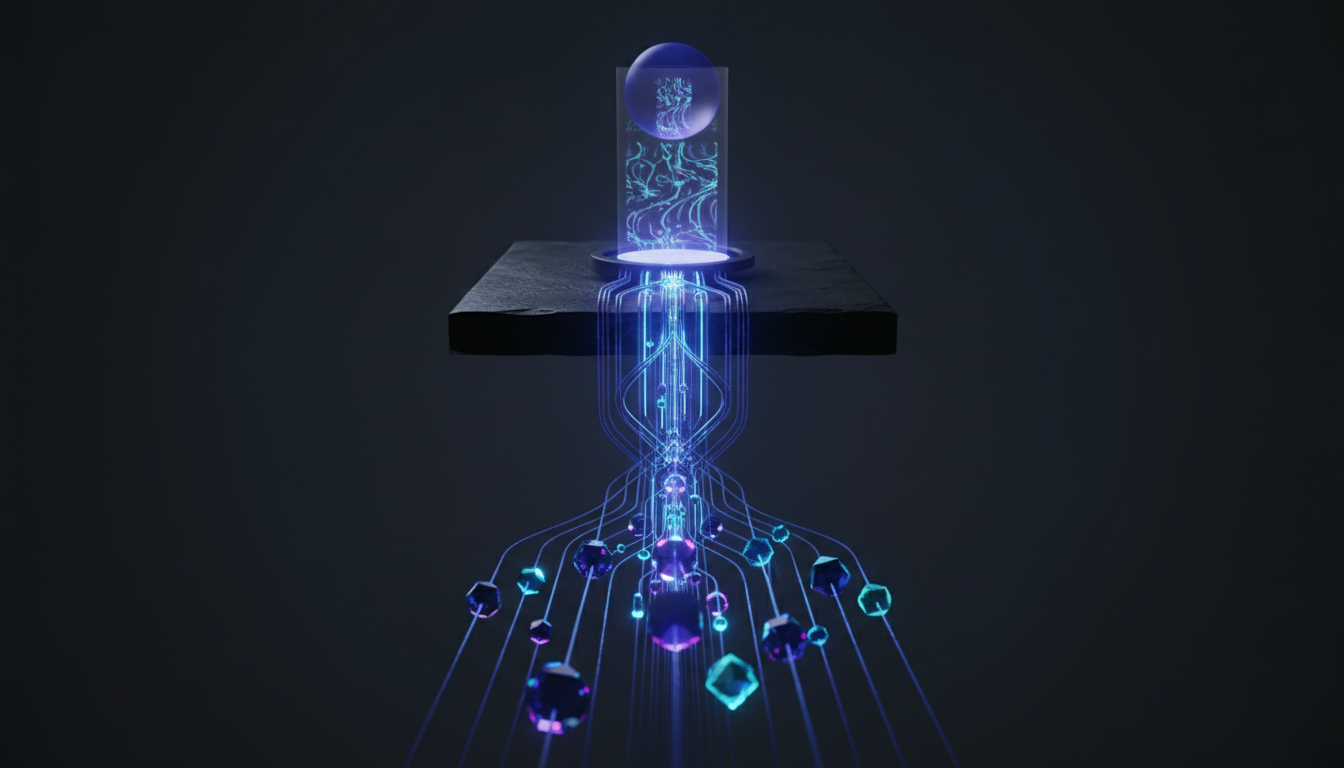

The trajectory of generative video has historically been defined by a single metric: fidelity. However, as the initial awe of diffusion-based rendering fades, the industry is demanding a pivot toward utility and controllability. The release of Google Veo AI 3.1 marks a significant architectural shift in this direction. By moving beyond purely stochastic text-to-video generation, Google is building a pipeline that prioritizes granular user control through its new “Ingredients to Video” mechanism and that addresses the geometric constraints of the mobile-first web through improved vertical consistency.

For technical architects analyzing the generative landscape, Veo 3.1 appears to be not merely a retrained model but a fundamental optimization of how control signals are injected into the latent diffusion process. This analysis dissects the technical implications of these updates, exploring the spatiotemporal transformers likely at play and the impact on the creator economy’s infrastructure.

The Architecture of Control: Deconstructing ‘Ingredients to Video’

The defining feature of the Google Veo AI 3.1 update is the introduction of “Ingredients to Video.” From a machine learning perspective, this represents a sophisticated evolution in multimodal input processing. Previous generative video models (such as early Gen-2 or Stable Diffusion-based video forks) struggled with concept bleeding, where the style of an input image would overwhelm the semantic instruction of the prompt, or vice versa.

Veo 3.1 appears to address this through stronger signal disentanglement. The “Ingredients” approach lets users supply distinct multimodal inputs: visual assets (images), stylistic references, and textual context. The model likely uses a multi-encoder setup in which visual embeddings are weighted separately from textual embeddings before entering the cross-attention layers of the transformer backbone.
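One published way to weight visual and textual embeddings separately before cross-attention is the decoupled cross-attention used by adapters such as IP-Adapter: the latent queries attend to text tokens and image tokens in two separate attention calls, and the image result is scaled before the two are summed. The NumPy sketch below illustrates only that idea; nothing confirms Veo 3.1 uses it, the learned key/value projections are omitted (tokens are passed in as ready-made key/value pairs), and `image_scale` is a hypothetical knob.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(q, k, v):
    # Scaled dot-product attention: q is (Nq, d); k and v are (Nk, d).
    return softmax(q @ k.T / np.sqrt(q.shape[-1])) @ v

def decoupled_cross_attention(latent_q, text_kv, image_kv, image_scale=1.0):
    """Attend to prompt tokens and ingredient tokens separately, then blend.

    image_scale (hypothetical) sets how strongly the visual ingredient
    contributes relative to the text prompt.
    """
    text_out = attention(latent_q, *text_kv)
    image_out = attention(latent_q, *image_kv)
    return text_out + image_scale * image_out

rng = np.random.default_rng(0)
d = 16
q = rng.standard_normal((64, d))                                        # 64 latent video tokens
text_kv = (rng.standard_normal((8, d)), rng.standard_normal((8, d)))    # 8 prompt tokens
image_kv = (rng.standard_normal((4, d)), rng.standard_normal((4, d)))   # 4 ingredient tokens

out_text_only = decoupled_cross_attention(q, text_kv, image_kv, image_scale=0.0)
out_balanced = decoupled_cross_attention(q, text_kv, image_kv, image_scale=1.0)
```

Setting `image_scale` to 0 recovers text-only conditioning, which is what makes the two signals independently adjustable.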

Multimodal Signal Injection and Weighting

In standard diffusion models, the text prompt acts as the primary conditioning signal. Google Veo AI 3.1 seemingly adopts a more hierarchical approach to conditioning. By treating input images as “ingredients,” the model can hold specific features fixed, such as the geometry of a character or the texture of a product, while letting the diffusion process synthesize motion and lighting around those anchors.
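Weighting an image ingredient against a prompt at sampling time can be illustrated with the two-scale classifier-free guidance introduced by InstructPix2Pix, which combines three noise predictions with separate strengths for the image and the text. This is a sketch of that published technique, not Veo’s method; the three `eps_*` arrays are random stand-ins for denoiser outputs a real model would produce.

```python
import numpy as np

def dual_guidance(eps_uncond, eps_image, eps_full, s_image, s_text):
    """Two-scale classifier-free guidance (InstructPix2Pix-style).

    eps_uncond: noise prediction with neither image nor text conditioning
    eps_image:  prediction conditioned on the image ingredient only
    eps_full:   prediction conditioned on both image and text
    s_image / s_text: independent strengths for the visual and textual signals
    """
    return (eps_uncond
            + s_image * (eps_image - eps_uncond)
            + s_text * (eps_full - eps_image))

rng = np.random.default_rng(0)
e0, e_img, e_full = (rng.standard_normal((4, 8, 8)) for _ in range(3))

# Raising s_image pulls samples toward the ingredient; raising s_text toward the prompt.
strong_identity = dual_guidance(e0, e_img, e_full, s_image=2.0, s_text=5.0)
```

With both scales at 1.0 the formula collapses to the fully conditioned prediction, so the scales act purely as extrapolation knobs on each signal.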

This suggests mechanisms similar to adapter layers or ControlNets, but integrated natively into the core 3D U-Net or Transformer architecture. Such an integration would allow for:

- Entity Permanence: The ability to keep a subject consistent across frames without the model “forgetting” the subject’s structure during occlusion or rotation.

- Style Transfer Isolation: Applying a visual style (e.g., “cyberpunk wireframe”) without altering the geometry of the source ingredients.
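The ControlNet idea mentioned above rests on a simple trick: a trainable copy of a layer receives the extra control signal, and its output enters the frozen backbone through a zero-initialized projection, so training starts from exactly the backbone’s original behavior. The toy version below assumes a single dense layer and a single zero projection (ControlNet itself uses zero convolutions on both the input and output of the copy); it illustrates the technique and is not Veo’s architecture.

```python
import numpy as np

class ZeroInitControlBranch:
    """Toy ControlNet-style layer: frozen base plus a trainable copy behind a zero projection."""

    def __init__(self, base_weight):
        d_out = base_weight.shape[0]
        self.base_weight = base_weight              # frozen backbone weight, shape (d_out, d_in)
        self.copy_weight = base_weight.copy()       # trainable copy that sees the control signal
        self.zero_proj = np.zeros((d_out, d_out))   # zero-initialized output projection

    def __call__(self, x, control):
        base_out = x @ self.base_weight.T                  # frozen path, never modified
        branch_out = (x + control) @ self.copy_weight.T    # copy receives the ingredient features
        return base_out + branch_out @ self.zero_proj      # adds nothing until zero_proj is trained

rng = np.random.default_rng(1)
W = rng.standard_normal((8, 16))
layer = ZeroInitControlBranch(W)
x = rng.standard_normal((4, 16))
control = rng.standard_normal((4, 16))   # hypothetical features of a reference image
y = layer(x, control)                    # equals x @ W.T at initialization
```

Because the branch starts silent, the pretrained model’s geometry and style priors are untouched until the control path has learned something useful.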

Parameter-Efficient Fine-Tuning (PEFT) Implications

The speed and responsiveness of the “Ingredients to Video” feature suggest that Google is leveraging highly optimized inference pipelines, possibly applying Parameter-Efficient Fine-Tuning (PEFT) techniques such as LoRA (Low-Rank Adaptation) on the fly. This would let the foundation model adapt to user-provided ingredients without a full weight update, drastically reducing the computational cost compared with full fine-tuning while maintaining high fidelity to the source material.
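For reference, LoRA freezes a weight matrix W and learns a low-rank update, so the layer computes Wx + (alpha / r)·BAx with A of shape (r, d_in) and B of shape (d_out, r). The sketch below shows the forward pass and the parameter savings for a hypothetical 1024×1024 layer at rank 8; whether Veo applies LoRA per request remains the speculation stated in the text.

```python
import numpy as np

def lora_forward(x, W, A, B, alpha):
    """Frozen linear layer W plus a low-rank update B @ A scaled by alpha / r."""
    r = A.shape[0]
    return x @ W.T + (alpha / r) * (x @ A.T @ B.T)

d_in, d_out, r, alpha = 1024, 1024, 8, 16
rng = np.random.default_rng(0)
W = rng.standard_normal((d_out, d_in)) * 0.02   # frozen pretrained weight
A = rng.standard_normal((r, d_in)) * 0.01       # trainable, small random init
B = np.zeros((d_out, r))                        # trainable, zero init: no change at the start
x = rng.standard_normal((2, d_in))

full_params = d_out * d_in
lora_params = r * (d_in + d_out)
print(lora_params, full_params, lora_params / full_params)  # 16384 1048576 0.015625
```

The adapter trains about 1.6% of the layer’s parameters, and after training the update can be merged into W (W + (alpha / r)·BA) so inference costs nothing extra.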

Conquering the 9:16 Aspect Ratio: Vertical Consistency

One of the most persistent challenges in training Large Vision Models (LVMs) for video is the bias inherent in training datasets. The vast majority of high-quality cinematic footage used to train these models is in 16:9 or 21:9 aspect ratios. Consequently, when earlier models attempted to generate vertical (9:16) video, they often exhibited “cropping artifacts” or framed the action poorly, hallucinating details at the top and bottom of the frame that lacked temporal coherence.

Google Veo AI 3.1 specifically targets vertical consistency, a critical requirement for the YouTube Shorts and TikTok ecosystems. This is not a simple crop operation; it is a generative capability rooted in spatiotemporal understanding.

Training Data Rebalancing and Cropping Invariance

To achieve this, Google’s engineers likely employed a combination of dataset rebalancing and specific architectural constraints:

Variable Aspect Ratio Training

Unlike models that resize all training data to a square or landscape resolution, Veo 3.1 likely uses a “bucketed” training approach in which videos of similar aspect ratios are grouped into the same batches. This prevents the model from learning a strong prior that associates “high quality” solely with “landscape orientation.” By treating the aspect ratio as a conditioning signal (for example, passing the target resolution into the network as an embedding), the model learns to compose the scene specifically for a vertical field of view.
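The bucketing and resolution-conditioning strategy described above can be sketched in a few lines. The bucket list, the log-ratio matching rule, and the sinusoidal size features (similar in spirit to the size conditioning used by SDXL) are all assumptions for illustration, not details Google has disclosed.

```python
import math
from collections import defaultdict

# Hypothetical (width, height) training buckets; not Veo's actual configuration.
BUCKETS = [(720, 1280), (768, 1024), (960, 960), (1024, 768), (1280, 720)]

def assign_bucket(width, height):
    """Pick the bucket whose aspect ratio is closest in log space."""
    target = math.log(width / height)
    return min(BUCKETS, key=lambda b: abs(math.log(b[0] / b[1]) - target))

def group_by_bucket(clips):
    """Group (clip_id, width, height) records so each batch shares one aspect ratio."""
    groups = defaultdict(list)
    for clip_id, width, height in clips:
        groups[assign_bucket(width, height)].append(clip_id)
    return dict(groups)

def size_embedding(width, height, dim=8):
    """Sinusoidal features (dim per value) of the target resolution, for conditioning."""
    half = dim // 2
    freqs = [math.exp(-math.log(10000.0) * i / half) for i in range(half)]
    features = []
    for value in (width, height):
        features += [math.sin(value * f) for f in freqs]
        features += [math.cos(value * f) for f in freqs]
    return features

clips = [("a", 1080, 1920), ("b", 1920, 1080), ("c", 1080, 1350), ("d", 720, 1280)]
print(group_by_bucket(clips))
# {(720, 1280): ['a', 'd'], (1280, 720): ['b'], (768, 1024): ['c']}
```

Matching in log space treats 2:1 and 1:2 as equally far from square, so portrait and landscape clips are handled symmetrically.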

Addressing the “Stretching” Hallucination

A common failure mode in generative video is the stretching of objects when a non-native aspect ratio is forced. Veo 3.1 appears to preserve Euclidean proportions within its latent space: when it generates vertical video, its attention layers seem to respect the vertical structure of the scene, so a subject walking from the background to the foreground keeps correct proportions instead of becoming elongated to fill the frame. This points to improved 3D spatial awareness within the model’s internal world model.
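The stretching failure is easy to quantify. A non-uniform resize scales the horizontal and vertical axes by different factors, and their ratio is how much taller and thinner every object becomes. The helper below is plain arithmetic, not model code.

```python
def distortion(src_w, src_h, dst_w, dst_h):
    """Factor by which a non-uniform resize elongates shapes vertically.

    1.0 means proportions are preserved; values above 1 mean objects
    become taller and thinner.
    """
    sx = dst_w / src_w
    sy = dst_h / src_h
    return sy / sx

print(round(distortion(1920, 1080, 1080, 1920), 3))  # 3.16
```

Forcing a 1920×1080 frame into 1080×1920 elongates every object by (16/9)², about 3.16, which is why a model has to compose natively for the vertical canvas rather than warp a landscape prior.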

Spatiotemporal Coherence in Google Veo AI 3.1

The leap to version 3.1 is not just about inputs and aspect ratios; it also represents a tightening of the implicit physics model learned by the neural network. Video generation requires maintaining coherence across two axes: spatial (pixels within a frame) and temporal (pixels across time).

Reducing “Dream-Like” Morphing

Early AI video was characterized by a dream-like quality in which objects would morph or melt. Google Veo AI 3.1 exhibits significantly higher temporal stability, which suggests improvements in the temporal attention layers of the Transformer backbone. By extending the attention context across a larger number of frames, the model can “look back” further in time to keep an object generated in frame 1 consistent in frame 60.
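Extending the temporal context can be pictured as widening the band of frames each frame may attend to. The NumPy sketch below applies self-attention along the time axis independently for each spatial token (as in factorized space-time attention) and masks out frame pairs farther apart than `max_distance`. It omits the learned query/key/value projections, and the windowed mask is an illustrative assumption, not a description of Veo’s layers.

```python
import numpy as np

def temporal_attention(x, max_distance):
    """Self-attention along time, applied independently to each spatial token.

    x: (T, N, D) latents for T frames with N spatial tokens each.
    max_distance: frames farther apart than this cannot attend to each other.
    Returns the attended latents (T, N, D) and the weights (N, T, T).
    """
    T, N, D = x.shape
    xt = np.transpose(x, (1, 0, 2))                            # (N, T, D): one sequence per token
    scores = xt @ np.transpose(xt, (0, 2, 1)) / np.sqrt(D)     # (N, T, T)
    t = np.arange(T)
    blocked = np.abs(t[:, None] - t[None, :]) > max_distance   # (T, T), True = masked out
    scores = np.where(blocked, -np.inf, scores)
    scores = scores - scores.max(axis=-1, keepdims=True)       # diagonal is never masked
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    out = weights @ xt                                         # (N, T, D)
    return np.transpose(out, (1, 0, 2)), weights

rng = np.random.default_rng(0)
x = rng.standard_normal((60, 4, 8))                    # 60 frames, 4 spatial tokens, dim 8
out, w_short = temporal_attention(x, max_distance=8)   # short temporal context
_, w_full = temporal_attention(x, max_distance=59)     # every frame sees every other frame
```

With an 8-frame window, frame 60 assigns zero weight to frame 1; with the full window it can attend to frame 1 directly, at a cost that grows quadratically with the number of frames.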

Latent Space Dynamics

The “Ingredients” feature also aids coherence. By anchoring generation to a provided image, the diffusion process has a fixed reference to return to. This reduces variance in the latent trajectory, constraining the model to generate frames that are plausible continuations of the input ingredients rather than drifting away from them.
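One published way to anchor sampling to a reference is replacement-style conditioning: at every sampling step, the latent of the conditioning frame is overwritten with the encoded reference, noised to the current step’s level. The sketch below assumes a DDPM-style schedule (`alpha_bar_t`) and a reference that serves as frame 0; other approaches concatenate the reference latent as extra input channels instead. It is not a description of how Veo implements ingredients.

```python
import numpy as np

def noised_reference(z_ref, alpha_bar_t, rng):
    """Sample q(z_t | z_0): forward-diffuse the clean ingredient latent to step t."""
    noise = rng.standard_normal(z_ref.shape)
    return np.sqrt(alpha_bar_t) * z_ref + np.sqrt(1.0 - alpha_bar_t) * noise

def anchor_first_frame(video_latents, z_ref, alpha_bar_t, rng):
    """Replacement-style conditioning: overwrite frame 0 with the noised reference.

    video_latents: (T, C, H, W) noisy latents at the current sampling step.
    Intended to run before each denoising step so the model always sees the ingredient.
    """
    anchored = video_latents.copy()
    anchored[0] = noised_reference(z_ref, alpha_bar_t, rng)
    return anchored

rng = np.random.default_rng(0)
z_ref = rng.standard_normal((4, 16, 9))        # encoded ingredient image (toy shape)
latents = rng.standard_normal((8, 4, 16, 9))   # 8 noisy video frames (toy shape)

late = anchor_first_frame(latents, z_ref, alpha_bar_t=1.0, rng=rng)  # final, noise-free step
```

At the last step (`alpha_bar_t = 1.0`) the anchored frame equals the reference exactly, and the remaining frames are denoised in its presence.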

Integration into the Creative Stack: The API Economy

For technical architects and developers, the release of Veo 3.1 signals a shift toward API-driven creativity. Google is positioning Veo not just as a standalone tool but as a generative backend for the broader YouTube and Workspace ecosystem.

Retrieval-Augmented Generation (RAG) for Video

The concept of “Ingredients” creates a pathway for Video RAG. Imagine a workflow in which a brand’s asset library is indexed. A creative director could prompt Veo 3.1 with a script, and the system would dynamically pull specific product shots (ingredients) from the database to generate a vertically optimized ad spot. This would move AI video from “fun experiment” to “enterprise production pipeline.”
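A minimal retrieval step for such a workflow ranks indexed assets by cosine similarity between an embedding of the script line and an embedding of each asset. The three-dimensional vectors and asset names below are toy stand-ins for a real image-text encoder and asset library (both hypothetical); the retrieved files would then be supplied as ingredients to whatever generation endpoint is in use.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def retrieve_ingredients(query_vec, library, k=2):
    """Return the ids of the k assets whose embeddings best match the query."""
    ranked = sorted(library.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [asset_id for asset_id, _ in ranked[:k]]

# Toy embeddings standing in for an indexed brand asset library (hypothetical).
library = {
    "sneaker_side.png": [0.9, 0.1, 0.0],
    "sneaker_top.png": [0.8, 0.2, 0.1],
    "logo_dark.png": [0.0, 0.1, 0.9],
}
query = [1.0, 0.15, 0.05]  # e.g. embedding of "close-up of the running shoe"
print(retrieve_ingredients(query, library))  # ['sneaker_side.png', 'sneaker_top.png']
```

In production, the linear scan would typically be replaced by an approximate nearest-neighbor index, but the ranking logic is the same.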

Strategic Analysis: Veo vs. The Field

While OpenAI’s Sora dazzled with physics simulation and Runway’s Gen-3 Alpha impressed with photorealism, Google Veo AI 3.1 is carving a niche in controllability. In a production environment, predictability is more valuable than raw capability. If a director cannot control the output, the tool is useless for commercial work. By focusing on “Ingredients” and precise aspect ratio control, Google is targeting the professional creator class that requires reliable, repeatable results over stochastic chaos.

Technical Deep Dive FAQ

How does Google Veo AI 3.1 handle occlusion in vertical video?

Veo 3.1 likely relies on temporal attention mechanisms that encode a form of object permanence. When a subject is occluded (blocked) in a vertical frame, the model’s 3D understanding (learned from vast video datasets) can predict the subject’s trajectory behind the obstruction, so the subject re-emerges with the correct features and lighting, anchored to the initial “ingredients” input.

Does the “Ingredients to Video” feature increase inference latency?

While processing multimodal inputs (images + text) is computationally more intensive than handling text-only prompts, Google likely employs optimized cross-attention layers. The “ingredient” images are presumably encoded once into latent embeddings and reused across the denoising steps, minimizing the added latency. The bottleneck would remain the iterative denoising of the diffusion process, not the encoding of the input signals.
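The encode-once pattern can be sketched independently of any real model: the ingredient encoder runs before the denoising loop, and every step reuses the cached embeddings, so encoding cost grows with the number of ingredients rather than the number of steps. `CountingEncoder` and the scalar update inside the loop are hypothetical stand-ins for an image encoder and a denoising step.

```python
class CountingEncoder:
    """Hypothetical stand-in for an image encoder that records how often it runs."""

    def __init__(self):
        self.calls = 0

    def __call__(self, image):
        self.calls += 1
        return [float(sum(image))]  # toy one-dimensional "embedding"

def generate(ingredients, encoder, num_steps=50):
    # Encode each ingredient exactly once, before the denoising loop starts.
    cached = [encoder(image) for image in ingredients]
    conditioning = sum(embedding[0] for embedding in cached)
    latent = 0.0
    for _ in range(num_steps):
        # Every step reuses the cached conditioning instead of re-encoding.
        latent = 0.9 * latent + 0.1 * conditioning  # toy stand-in for one denoising step
    return latent

encoder = CountingEncoder()
generate([[1, 2], [3]], encoder, num_steps=50)
print(encoder.calls)  # 2
```

In a real transformer, what gets cached would typically be the ingredient tokens’ projected keys and values, which every cross-attention call can then reuse.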

Is Google Veo AI 3.1 using a Diffusion Transformer (DiT) architecture?

While Google has not open-sourced the weights, the industry trend and Veo’s capabilities strongly suggest a Diffusion Transformer (DiT) architecture or a heavily modified Video Vision Transformer (ViViT). Both are transformer designs that operate on spatiotemporal patch tokens; DiT in particular replaces the traditional U-Net denoising backbone with a transformer, allowing for better scaling and more effective handling of spatiotemporal data.
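DiT-style models (and ViViT’s tubelet embedding) turn a video into tokens by cutting it into small spacetime patches and flattening each one. The NumPy sketch below does this for a latent video of shape (T, H, W, C); the latent size and patch sizes are hypothetical values chosen for a 9:16 frame.

```python
import numpy as np

def patchify_video(video, pt, ph, pw):
    """Split a (T, H, W, C) latent video into flattened spacetime patches.

    Returns an array of shape (num_tokens, pt * ph * pw * C).
    """
    T, H, W, C = video.shape
    assert T % pt == 0 and H % ph == 0 and W % pw == 0, "dims must divide patch sizes"
    x = video.reshape(T // pt, pt, H // ph, ph, W // pw, pw, C)
    x = x.transpose(0, 2, 4, 1, 3, 5, 6)   # patch-grid axes first, within-patch axes last
    return x.reshape(-1, pt * ph * pw * C)

# Hypothetical 9:16 latent: 16 frames, 160 x 90 spatial, 4 channels.
video = np.arange(16 * 160 * 90 * 4, dtype=np.float32).reshape(16, 160, 90, 4)
tokens = patchify_video(video, pt=2, ph=2, pw=2)
print(tokens.shape)  # (28800, 32)
```

Here 8 × 80 × 45 = 28,800 tokens of dimension 32 come from a modest latent, which illustrates how quickly the token count, and with it the attention cost, grows with duration and resolution.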

What makes 9:16 generation in Veo 3.1 superior to cropping landscape video?

Cropping a 16:9 generation to 9:16 discards roughly two-thirds of the frame (a 1920×1080 frame keeps only a column about 608 pixels wide), forcing a heavy upscale and often ruining the composition by cutting off heads or key action. Veo 3.1 generates natively in 9:16, meaning every pixel is synthesized specifically for that canvas. This ensures better framing, full resolution, and a composition that can follow the rule of thirds within a vertical frame.
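The resolution loss from cropping can be checked with simple arithmetic: the largest 9:16 region inside a 1920×1080 frame is 607.5 pixels wide (about 608 after rounding to whole pixels), which keeps roughly 31.6% of the original pixels. The helper below is illustrative arithmetic only.

```python
def center_crop_9x16(width, height):
    """Largest 9:16 (width:height) region that fits in a frame, and the share of pixels kept."""
    crop_w = min(width, height * 9 / 16)
    crop_h = crop_w * 16 / 9
    kept = (crop_w * crop_h) / (width * height)
    return crop_w, crop_h, kept

w, h, kept = center_crop_9x16(1920, 1080)
print(w, h, round(kept, 3))  # 607.5 1080.0 0.316
```

Filling a 1080×1920 vertical canvas from that crop would also require upscaling it by roughly 1.78× in each dimension.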

This technical analysis was developed by our editorial intelligence unit, drawing on insights from the original primary briefing.