Google D4RT Breakthrough: Powering Spatial Intelligence AI with 300x Faster 4D Vision

Executive Analysis: The transition from Large Language Models (LLMs) to Large World Models (LWMs) has hit a critical inflection point. Google DeepMind’s introduction of D4RT (Dynamic 4D Reconstruction and Tracking) represents a structural paradigm shift in computer vision, moving beyond static 3D reconstruction into the domain of temporal dynamics. By achieving a 300x acceleration in rendering speeds, D4RT is not merely a graphics update; it is the foundational infrastructure required to unlock true Spatial Intelligence AI for autonomous robotics and next-generation immersive environments.

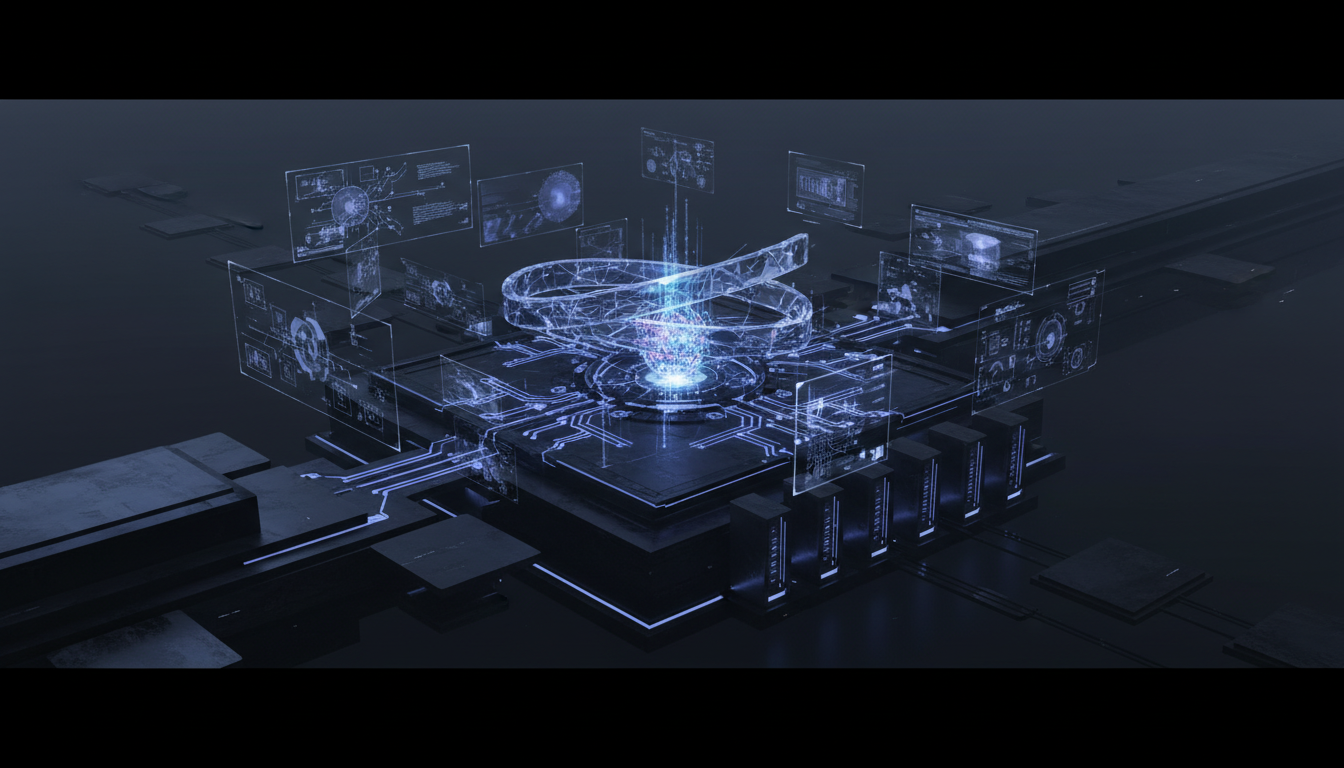

The Architecture of Spatial Intelligence AI: Beyond 2D Constraints

For the past decade, the frontier of artificial intelligence has been dominated by static pattern recognition. While transformer architectures have mastered the probability distribution of language, their application to the physical world has been hampered by the computational weight of rendering three-dimensional space in real time. This is the bottleneck that Spatial Intelligence AI aims to shatter.

Spatial Intelligence AI refers to an agent’s ability to perceive, reason about, and interact with three-dimensional space over time. Unlike generative video models (such as OpenAI’s Sora), which prioritize pixel-level coherence, a true spatial model must understand the underlying physics, geometry, and object permanence of a scene. The D4RT framework addresses this by encoding visual data not as a sequence of 2D frames, but as a continuous 4D function over the three spatial dimensions (X, Y, Z) and the critical fourth dimension of time (T).
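To make the idea of a "continuous 4D function" concrete, here is a minimal toy sketch. It is not D4RT's learned representation; `moving_sphere_occupancy` and its parameters are hypothetical names chosen for illustration. The point is only that such a function can be queried at any (X, Y, Z, T), including times that fall between video frames.

```python
import math

def moving_sphere_occupancy(x, y, z, t, radius=0.5, speed=1.0):
    """Toy 4D field: 1.0 inside a sphere whose centre slides along +x over time."""
    centre_x = speed * t  # the centre position depends continuously on time
    dist = math.sqrt((x - centre_x) ** 2 + y ** 2 + z ** 2)
    return 1.0 if dist <= radius else 0.0

# The same spatial point is occupied at t=0 and empty once the sphere has moved on.
inside_at_start = moving_sphere_occupancy(0.0, 0.0, 0.0, t=0.0)
inside_later = moving_sphere_occupancy(0.0, 0.0, 0.0, t=1.0)

# A query at an arbitrary, non-frame-aligned time is just another function call.
between_frames = moving_sphere_occupancy(0.4, 0.0, 0.0, t=0.37)
```

A learned model would replace the hand-written geometry with a network, but the query interface would look similar.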

Deconstructing the D4RT Methodology

Traditional Neural Radiance Fields (NeRFs) rely on dense ray-marching operations to render scenes. While accurate, the inference latency is prohibitively high for real-time applications, often requiring seconds or minutes to render a single novel view. D4RT circumvents this by using a hybrid transformer-based architecture that predicts radiance fields in a single forward pass, rather than through per-scene optimization.
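For readers who want to see why ray marching is expensive, the sketch below implements the standard NeRF-style compositing rule for a single ray, using scalar colors for brevity. The function name and the example image size and sample count are illustrative assumptions, not figures from the D4RT briefing.

```python
import math

def composite_ray(densities, colors, step):
    """Accumulate color along one ray from per-sample density and color.

    Each sample contributes transmittance * alpha * color, where
    alpha = 1 - exp(-density * step) and transmittance is the fraction
    of light that has survived all earlier samples.
    """
    transmittance = 1.0
    color = 0.0
    for sigma, c in zip(densities, colors):
        alpha = 1.0 - math.exp(-sigma * step)
        color += transmittance * alpha * c
        transmittance *= 1.0 - alpha
    return color, transmittance

# An opaque first sample hides whatever lies behind it.
opaque_color, leftover = composite_ray([50.0, 50.0], [0.2, 0.9], step=1.0)

# Illustrative cost: every sample on every ray needs one network evaluation.
evaluations_per_image = 800 * 800 * 192  # assumed image size and samples per ray
```

With these assumed numbers a single image needs roughly 123 million network evaluations, which is the workload a single-forward-pass model avoids.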

The core innovation lies in how D4RT handles feature quantization. By tokenizing 4D space-time volumes, the model can apply the self-attention mechanisms of transformers to spatial coordinates. This allows the system to:

- Disentangle Static and Dynamic Elements: The model separates background geometry from moving actors, allowing for independent manipulation of scene components.

- Maintain Global Context Awareness: Unlike Convolutional Neural Networks (CNNs), which focus on local receptive fields, the transformer backbone enables the AI to understand global spatial relationships, essential for occlusion handling.

- Preserve Temporal Consistency: By embedding time as a fundamental dimension, D4RT prevents the “flickering” artifacts common in frame-by-frame generation, ensuring smooth temporal interpolation for robotic perception.
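The tokenization step described above can be sketched as follows. This is a simplified illustration that cuts a video volume (time, height, width) into non-overlapping space-time patches; the patch sizes and the helper name `spacetime_tokens` are assumptions, and D4RT's actual tokenizer may differ.

```python
def spacetime_tokens(frames, height, width, patch_t, patch_h, patch_w):
    """Return the (t, y, x) origin of every non-overlapping space-time patch."""
    tokens = []
    for t in range(0, frames - patch_t + 1, patch_t):
        for y in range(0, height - patch_h + 1, patch_h):
            for x in range(0, width - patch_w + 1, patch_w):
                tokens.append((t, y, x))
    return tokens

# A 16-frame, 64x64 clip cut into 2x16x16 patches.
tokens = spacetime_tokens(frames=16, height=64, width=64,
                          patch_t=2, patch_h=16, patch_w=16)

# Self-attention compares every token with every other token,
# so its cost grows with the square of the token count.
attention_pairs = len(tokens) ** 2
```

Each token origin doubles as a space-time coordinate, which is what lets attention relate a patch at one moment to a patch somewhere else at another moment.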

Benchmarking the 300x Inference Acceleration

The headline metric of a 300x speed increase over baseline methods is not hyperbole; it is a prerequisite for embodied AI. In the context of Spatial Intelligence AI, latency is the enemy of agency. A robot navigating a dynamic warehouse cannot wait 500ms for its vision system to process a changing environment; it requires near-instantaneous inference to make closed-loop control decisions.
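A quick back-of-the-envelope calculation shows what that latency means physically. The robot speed is an assumed value, and applying the 300x factor directly to the 500 ms figure is an illustrative simplification rather than a measured result.

```python
def distance_travelled_m(speed_m_per_s, latency_ms):
    """Distance covered while the vision system is still processing a frame."""
    return speed_m_per_s * latency_ms / 1000.0

baseline_latency_ms = 500.0  # latency figure quoted in the text
speedup = 300.0              # headline factor quoted in the text
robot_speed = 1.5            # m/s, assumed brisk walking pace

fast_latency_ms = baseline_latency_ms / speedup
blind_before = distance_travelled_m(robot_speed, baseline_latency_ms)
blind_after = distance_travelled_m(robot_speed, fast_latency_ms)

print(f"{blind_before:.2f} m travelled per frame at {baseline_latency_ms:.0f} ms")
print(f"{blind_after * 1000:.1f} mm travelled per frame at {fast_latency_ms:.2f} ms")
```

Under these assumptions the robot moves about three quarters of a metre on stale information at 500 ms, versus a few millimetres after the speedup.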

From Offline Rendering to Real-Time Inference

DeepMind’s engineering breakthrough involves optimizing the parameter efficiency of the view synthesis pipeline. Standard methods require retraining or fine-tuning a model for every new scene (scene-specific optimization). D4RT appears to leverage a generalizable encoder trained on massive datasets of 4D video, allowing it to perform zero-shot novel view synthesis on unseen environments.

This efficiency is likely achieved through:

- Sparse Voxel Octrees: Reducing the computational load by only processing occupied space, ignoring empty air.

- Latent Diffusion Distillation: Compressing the high-dimensional data into a lower-dimensional latent space where tensor operations are significantly faster.

- Hardware-Aware Kernel Optimization: Tailoring the transformer's compute kernels to maximize throughput on TPU v5 and NVIDIA H100 architectures.

This speed enables Spatial Intelligence AI to operate at 60+ FPS on edge devices, a threshold previously thought unattainable for high-fidelity 4D reconstruction.
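The sparse voxel octree idea listed above can be illustrated with a small sketch. It is a generic octree, not DeepMind's implementation; `build_octree` and `count_leaves` are hypothetical helpers. Empty octants produce no node at all, which is where the savings come from.

```python
def build_octree(points, origin, size, min_size):
    """Recursively subdivide a cube, creating nodes only where points exist."""
    if not points:
        return None  # empty space: nothing stored, nothing processed
    if size <= min_size:
        return {"leaf": True, "count": len(points)}
    half = size / 2.0
    children = {}
    for ix in (0, 1):
        for iy in (0, 1):
            for iz in (0, 1):
                ox = origin[0] + ix * half
                oy = origin[1] + iy * half
                oz = origin[2] + iz * half
                inside = [p for p in points
                          if ox <= p[0] < ox + half
                          and oy <= p[1] < oy + half
                          and oz <= p[2] < oz + half]
                child = build_octree(inside, (ox, oy, oz), half, min_size)
                if child is not None:
                    children[(ix, iy, iz)] = child
    return {"leaf": False, "children": children}

def count_leaves(node):
    """Count occupied leaf voxels in the tree."""
    if node is None:
        return 0
    if node["leaf"]:
        return 1
    return sum(count_leaves(child) for child in node["children"].values())

# Two points in opposite corners of a unit cube, resolved down to 0.25-unit voxels.
points = [(0.1, 0.1, 0.1), (0.9, 0.9, 0.9)]
tree = build_octree(points, origin=(0.0, 0.0, 0.0), size=1.0, min_size=0.25)
occupied_leaves = count_leaves(tree)
dense_cells = int(1.0 / 0.25) ** 3  # cells a dense grid would store at the same resolution
```

Here the octree stores 2 occupied leaves where a dense grid of the same resolution would hold 64 cells, and the gap widens quickly at finer resolutions.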

Implications for Robotics and World Models

The integration of D4RT into robotic operating systems marks the beginning of the “World Model” era. A World Model allows an AI to simulate the consequences of its actions before executing them. For Spatial Intelligence AI, this means the ability to imagine a future state (“If I push this cup, where will it fall?”) with physical accuracy.
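The cup question can be turned into a tiny worked example of "simulate before acting". The sketch below uses a hand-written ballistic model in place of a learned world model, which is a deliberate simplification; the table height, candidate push speeds, and safe-zone radius are all assumed values.

```python
import math

GRAVITY = 9.81  # m/s^2

def landing_offset_m(push_speed_m_s, table_height_m):
    """Horizontal distance from the table edge at which a pushed cup lands.

    Ignores air resistance and tumbling: the cup leaves the edge with a
    purely horizontal velocity and falls freely.
    """
    fall_time = math.sqrt(2.0 * table_height_m / GRAVITY)
    return push_speed_m_s * fall_time

# Imagine the outcome of several candidate actions before executing any of them.
candidate_pushes = [0.2, 0.5, 1.0]  # m/s, assumed
predictions = {v: landing_offset_m(v, table_height_m=0.75) for v in candidate_pushes}

# Keep only the pushes whose predicted landing point stays within 25 cm of the table.
safe_pushes = [v for v, offset in predictions.items() if offset <= 0.25]
```

A learned world model plays the role of `landing_offset_m` here, but for scenes where no closed-form physics is available.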

Solving Moravec’s Paradox

Moravec’s Paradox observes that high-level reasoning (chess, math) requires comparatively little computation, while low-level sensorimotor skills (walking, seeing) require enormous computational resources. D4RT is a direct assault on this paradox. By drastically reducing the compute required for 4D vision, it frees up system resources for higher-level planning and reasoning.

Sim-to-Real Transfer Learning

One of the critical failures in robotics is the “reality gap”—the discrepancy between simulation and the real world. Robots trained in low-fidelity simulators fail when deployed in the chaotic real world. D4RT’s ability to generate photorealistic, temporally consistent 4D environments allows for the creation of synthetic training data that is indistinguishable from reality. This enables:

- Infinite Data Generation: Creating rare edge-case scenarios (e.g., a child running in front of a robot) to train safety protocols without physical risk.

- Domain Randomization: Rapidly altering textures, lighting, and physics parameters in the D4RT-generated world to build robust policies.
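Domain randomization, as described in the list above, amounts to sampling a fresh set of scene parameters for every training episode. The sketch below shows the pattern; the parameter names and ranges are assumptions for illustration and do not correspond to any D4RT interface.

```python
import random

def sample_scene_params(rng):
    """Draw one randomized scene configuration for a training episode."""
    return {
        "texture_id": rng.randrange(100),            # which surface texture to apply
        "light_intensity": rng.uniform(0.2, 1.5),    # relative brightness
        "friction": rng.uniform(0.3, 1.0),           # floor friction coefficient
        "object_mass_kg": rng.uniform(0.1, 2.0),     # mass of the manipulated object
    }

# A seeded generator keeps the randomized curriculum reproducible.
rng = random.Random(0)
episodes = [sample_scene_params(rng) for _ in range(1000)]
```

A policy trained across all of these variations is less likely to overfit to any single lighting condition or surface property.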

The Intersection of AR and Spatial Computing

While robotics is the industrial beneficiary, the consumer application of D4RT lies in Augmented Reality (AR) and Mixed Reality (MR). Current AR devices struggle with occlusion—placing a digital object behind a physical one. This requires a perfect, real-time depth map of the environment.
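The occlusion problem reduces to a per-pixel depth comparison once a depth map is available. The following sketch uses string labels in place of pixel colors to keep the example readable; the function name and data layout are illustrative assumptions.

```python
def composite_with_occlusion(camera_rgb, scene_depth_m, virtual_rgb, virtual_depth_m):
    """Show a virtual pixel only where it is closer to the camera than the real surface."""
    output = []
    for cam_row, depth_row, virt_row, vdepth_row in zip(
            camera_rgb, scene_depth_m, virtual_rgb, virtual_depth_m):
        row = []
        for cam, depth, virt, vdepth in zip(cam_row, depth_row, virt_row, vdepth_row):
            visible = vdepth is not None and vdepth < depth
            row.append(virt if visible else cam)
        output.append(row)
    return output

# One image row: a sofa 1 m away stands in front of a wall 3 m away.
camera = [["wall", "sofa", "wall"]]
depth = [[3.0, 1.0, 3.0]]

# A virtual cube placed 2 m away covers the first two pixels only.
virtual = [["cube", "cube", None]]
virtual_depth = [[2.0, 2.0, None]]

result = composite_with_occlusion(camera, depth, virtual, virtual_depth)
# The cube appears in front of the wall but is hidden behind the sofa.
```

Any error in the estimated depth map shows up directly as a virtual object poking through real furniture, which is why depth quality matters so much for AR.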

Spatial Intelligence AI powered by D4RT can infer the complete 3D geometry of a room from sparse video input instantly. This allows for:

- Semantic Understanding: The AI doesn’t just see geometry; it identifies “chair,” “table,” “door,” and understands their affordances.

- Persistent Digital Twins: Creating a live, updating 4D mirror of a physical space that remote users can inhabit and interact with.

- Telepresence Optimization: Transmitting full holographic representations of users with minimal bandwidth by sending D4RT tokens rather than raw pixel streams.

Future Outlook: Toward General Purpose Robots (2026)

Looking ahead to the 2026 roadmap suggested by industry analysts, D4RT is likely a stepping stone toward a multimodal Generalist Agent. We anticipate the convergence of Gemini-class LLMs with D4RT-class vision models.

In this converged architecture, the LLM provides the semantic reasoning (“Find the keys”), while the Spatial Intelligence AI provides the navigational execution. The 300x speedup provided by D4RT ensures that this multimodal loop remains tight enough for human-like interaction speeds.

Technical Deep Dive FAQ

How does D4RT differ from 3D Gaussian Splatting?

While 3D Gaussian Splatting is renowned for high-speed rasterization of static scenes, its original formulation lacks the temporal dimension required for dynamic environments. D4RT integrates time (4D) natively into the transformer architecture, allowing it to model motion and deformation, which is critical for Spatial Intelligence AI in robotics.

Why is the transformer architecture preferred over CNNs for 4D vision?

Transformers utilize self-attention mechanisms that are permutation-equivariant (before positional encodings are added) and capable of handling long-range dependencies. In 4D vision, how an object moves at $t=0$ influences its position at $t=100$. CNNs, with their local receptive fields, struggle to capture these long-range temporal correlations effectively without massive stacking, which increases latency.
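The symmetry property of self-attention can be checked directly: without positional encodings, reordering the input tokens reorders the outputs in exactly the same way (permutation equivariance). The sketch below uses a single head with identity projections, an assumption made to keep the example free of learned weights.

```python
import math

def self_attention(tokens):
    """Single-head self-attention with identity query/key/value projections."""
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in tokens]
        peak = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        outputs.append([
            sum(w * value[i] for w, value in zip(weights, tokens)) / total
            for i in range(dim)
        ])
    return outputs

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
perm = [2, 0, 1]

out = self_attention(tokens)
permuted_out = self_attention([tokens[i] for i in perm])

# Equivariance: attending over shuffled tokens gives the shuffled original outputs.
expected = [out[i] for i in perm]
```

Because attention alone cannot tell tokens apart by position, space-time models add positional information to each token so that "where" and "when" are available to the network.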

What is the role of D4RT in training Foundation Models for robotics?

D4RT serves as a high-fidelity simulator engine. It can take raw video data and convert it into a manipulatable 4D representation. This effectively turns YouTube videos or ego-centric robot logs into interactive simulations, providing the massive dataset scale required to pre-train robotic Foundation Models, similar to how web-scale text corpora such as Common Crawl were used to pre-train language models like GPT-3.

Does D4RT require depth sensors (LiDAR) or just RGB cameras?

DeepMind’s approach focuses on monocular or stereo RGB input. The model learns to infer depth and geometry from visual parallax and temporal cues (structure-from-motion), reducing the hardware cost for robots and AR glasses by eliminating the need for expensive, power-hungry LiDAR arrays.
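The parallax cue mentioned above has a simple closed form in the rectified stereo case: depth equals focal length times baseline divided by disparity. The sketch below applies that classical relation; the camera parameters are assumed values, and a learned model infers depth from richer cues than this single formula.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Classical rectified-stereo relation: depth = focal * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px

# Assumed rig: 700 px focal length, cameras 12 cm apart.
near_m = depth_from_disparity(focal_px=700.0, baseline_m=0.12, disparity_px=42.0)
far_m = depth_from_disparity(focal_px=700.0, baseline_m=0.12, disparity_px=4.2)
```

With this rig, a 42-pixel shift corresponds to roughly 2 m and a 4.2-pixel shift to roughly 20 m, which shows why distant geometry is harder to recover: small pixel errors translate into large depth errors.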

Editorial Intelligence

This technical analysis was developed by our editorial intelligence unit, synthesizing advanced concepts in computer vision and neural rendering. It leverages insights and data validation from the primary technical briefing released by Google DeepMind. For the original source material regarding the D4RT breakthrough, please refer to this primary resource.