Waymo 6th-Gen Architecture: Deconstructing the Zeekr Platform’s Full Autonomy Rollout

Executive Synthesis: The deployment of Waymo’s 6th-generation hardware on the Geely Zeekr platform represents a fundamental decoupling from retrofitted legacy automotive constraints. This is not merely a vehicle refresh; it is the industry’s first mass-production-ready, purpose-built Level 4 architecture designed to optimize sensor fusion, reduce inference latency, and achieve unit-economic viability at scale.

The Shift from Retrofit to Native Architecture

For the past decade, the autonomous vehicle (AV) sector has been shackled by the limitations of the “retrofit paradigm.” The Chrysler Pacifica and Jaguar I-PACE iterations were marvels of engineering, but they were ultimately compromises—standard human-driven chassis adapted to host high-performance compute substrates. The arrival of the Zeekr-based robotaxi on U.S. roads marks the transition to a native autonomous topology.

As technical architects, we must analyze this through the lens of Hardware-Software Co-Design. The 6th-generation Waymo Driver is not an add-on; it is integrated into the vehicle’s nervous system. This purpose-built form factor allows for optimal sensor placement without the aesthetic or structural constraints of consumer vehicles, which in turn helps broaden the Operational Design Domain (ODD) the vehicle can support.

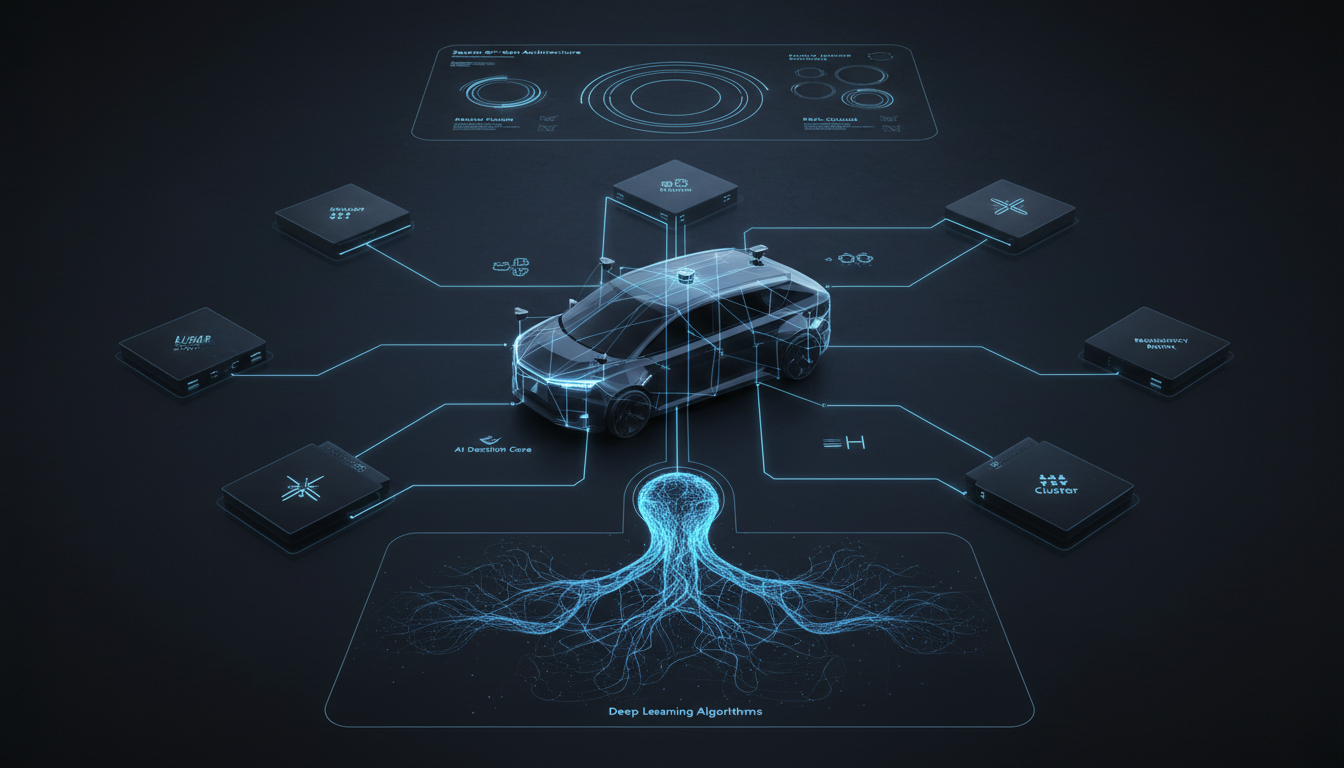

Sensor Fusion and the 6th-Gen Stack

The core efficacy of the new platform lies in its re-engineered sensor suite. Waymo has moved beyond simple redundancy to a sophisticated overlapping sensor topology that minimizes occlusion and maximizes semantic understanding of the environment.

1. High-Fidelity LiDAR Integration

The 6th-generation system uses fewer LiDAR units than previous iterations, yet achieves higher resolution. By strategically placing four LiDAR units, the system builds a full 360-degree point cloud dense enough for long-range object detection (up to 500 meters) and precise localization. This allows for superior performance in identifying non-standard objects, such as debris, erratic pedestrians, and open car doors, in complex urban canyons.

2. The Vision System: Semantic Segmentation at the Edge

With 13 cameras integrated into the vehicle’s roofline and periphery, the visual cortex of the Waymo Driver has been optimized for high-dynamic-range (HDR) performance. This is critical for edge-case handling, such as navigating sun glare, sudden transitions from tunnels to daylight, and identifying traffic light states at extreme distances. The system leverages convolutional neural networks (CNNs) and, increasingly, Vision Transformers (ViTs) to perform semantic segmentation in real time, categorizing pixels into drivable space, vegetation, dynamic agents, and static infrastructure.
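The last step of semantic segmentation, turning per-pixel class scores into labels, can be illustrated with a minimal sketch. It is not Waymo's implementation: the `CLASSES` list simply mirrors the four categories named above, and `segment` and the toy scores are hypothetical names and values chosen for illustration.

```python
# Hypothetical class list mirroring the categories named in the text.
CLASSES = ["drivable_space", "vegetation", "dynamic_agent", "static_infrastructure"]


def segment(logits):
    """Map an H x W grid of per-class scores to an H x W grid of class names.

    logits[r][c] is a list of len(CLASSES) scores for pixel (r, c), such as
    the final layer of a segmentation network might produce.
    """
    return [
        # Pick the index of the highest score for each pixel (argmax).
        [CLASSES[max(range(len(CLASSES)), key=lambda k: pixel[k])] for pixel in row]
        for row in logits
    ]


# Toy 1 x 2 "image": the first pixel scores highest for drivable space,
# the second for a dynamic agent.
scores = [[[2.0, 0.1, 0.3, 0.5], [0.2, 0.1, 3.1, 0.4]]]
labels = segment(scores)
```

In a real network the scores come from a learned model running on an accelerator; only the per-pixel argmax shown here is generic.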

3. Radar and Audio Multi-Modality

Radar remains the backbone of velocity detection. The 6th-gen imaging radar system provides crucial data on object speed and trajectory, functioning reliably in conditions where optical sensors degrade, such as heavy fog or torrential rain. Furthermore, the inclusion of External Audio Receivers (EARs) creates a multi-modal sensory input that allows the AI to localize emergency sirens with triangulation precision that exceeds human capability.
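One common way to estimate the direction of a sound source is time difference of arrival (TDOA) between two microphones. The sketch below assumes a far-field source and a single microphone pair; the function name, the spacing parameter, and the use of TDOA at all are assumptions for illustration, since the source does not describe how the EARs localize sirens.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for dry air at about 20 °C


def bearing_from_tdoa(delay_s, mic_spacing_m):
    """Far-field angle of arrival in degrees, measured from broadside.

    delay_s is the arrival-time difference between the two microphones and
    mic_spacing_m is the distance between them (both hypothetical inputs).
    """
    ratio = SPEED_OF_SOUND_M_S * delay_s / mic_spacing_m
    # Clamp so measurement noise cannot push asin() outside its domain.
    ratio = max(-1.0, min(1.0, ratio))
    return math.degrees(math.asin(ratio))
```

A single pair yields only a bearing with a front/back ambiguity; combining bearings from several pairs around the vehicle is what would allow a position estimate.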

Compute Efficiency and Thermal Management

One of the most understated engineering challenges in Level 4 autonomy is the compute-to-power ratio. Running heavy inference models, specifically large-scale transformer models for behavioral prediction, generates substantial heat and draws energy from the same battery that provides driving range.

The Zeekr platform addresses this with a centralized compute architecture that shares cooling loops with the EV’s battery thermal management system. This integration ensures that the inference engines maintain peak performance without thermal throttling, even during high-load scenarios in extreme ambient temperatures. This efficiency is paramount for maximizing range and uptime, directly impacting the unit economics of a robotaxi fleet.

Redundancy and Fail-Operational Safety

In the context of removing the human driver entirely, Fail-Operational architecture is non-negotiable. The 6th-generation platform features dual-redundant braking and steering systems. Unlike Fail-Safe systems (which bring the vehicle to a stop upon failure), Fail-Operational systems ensure that if a primary actuator or compute node fails, a secondary system can seamlessly take over to complete the maneuver or guide the vehicle to a safe harbor.

- Power Redundancy: Dual independent power rails ensure critical sensors and compute modules never lose power.

- Compute Redundancy: Parallel processing pipelines compare outputs; if a discrepancy exceeds a confidence threshold, the backup system initiates a safe fallback protocol.

- Inertial Navigation Redundancy: In GPS-denied environments, high-precision IMUs (Inertial Measurement Units) work in concert with simultaneous localization and mapping (SLAM) to maintain centimeter-level accuracy.
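The compute-redundancy check in the list above can be sketched as a comparison between two independently computed plans. Everything here is illustrative: the `Plan` fields, the tolerance values, and the fallback deceleration are assumptions, not figures from Waymo.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    steering_rad: float
    accel_m_s2: float


def select_plan(primary, backup, max_steer_diff=0.05, max_accel_diff=0.5):
    """Return (plan, mode), where mode is "nominal" or "fallback".

    The tolerances are placeholder values chosen for illustration only.
    """
    steer_diff = abs(primary.steering_rad - backup.steering_rad)
    accel_diff = abs(primary.accel_m_s2 - backup.accel_m_s2)
    if steer_diff <= max_steer_diff and accel_diff <= max_accel_diff:
        return primary, "nominal"
    # The pipelines disagree: follow the backup's steering and command at
    # least a gentle deceleration (hypothetical -1.0 m/s^2) as the fallback.
    fallback = Plan(backup.steering_rad, min(backup.accel_m_s2, -1.0))
    return fallback, "fallback"
```

For example, `select_plan(Plan(0.10, 1.0), Plan(0.40, 1.0))` returns a decelerating plan in `"fallback"` mode because the steering commands differ by more than the tolerance.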

Expanding the Operational Design Domain (ODD)

The deployment of these vehicles in cities like San Francisco, Phoenix, and Los Angeles serves as a validation crucible for the 6th-gen hardware’s weather resilience. Waymo has aggressively pushed the ODD to include diverse weather conditions. The sensor cleaning systems, which use high-pressure air and fluid jets, have been re-engineered to keep the sensors clear of mud, salt spray, and bug strikes.

Furthermore, the Behavioral Prediction Models have been fine-tuned on petabytes of real-world driving data. These models use probabilistic reasoning to anticipate the actions of other road users, effectively modeling “social interactions” between the robotaxi and aggressive human drivers, cyclists, and jaywalkers. This moves the system from reactive avoidance to proactive negotiation of right-of-way.

Technical Deep Dive FAQ

How does the 6th-Gen sensor suite differ from the Jaguar I-PACE iteration?

The 6th-gen suite is modular and significantly more cost-effective. It reduces the component count while increasing resolution and range. It is designed for manufacturability, allowing Waymo to scale production without the bottlenecks associated with the bespoke, hand-calibrated setups of the previous generation.

What is the role of Transformer models in the Waymo Driver stack?

Transformers are utilized for their attention mechanisms, which are superior at handling sequential data and establishing relationships between distant elements in a scene. In the context of AVs, this allows the system to better predict the future trajectory of dynamic agents based on their past behavior and the scene context, vastly improving intersection handling.
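The attention mechanism referred to here is, in its standard form, scaled dot-product attention: each query is compared against every key, and the resulting weights mix the values. A minimal pure-Python sketch of that generic operation follows; it is not specific to Waymo's models, and the toy vectors in the usage note are made up.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value vectors, one output dimension at a time.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs
```

In a trajectory-prediction setting, the queries might encode the agent being predicted and the keys and values the other agents and map elements, so the weights express which parts of the scene matter for that agent.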

How does the system handle sensor occlusion?

The system employs sensor fusion at the raw data level. If a camera is occluded, the LiDAR and radar data are weighted more heavily. The 360-degree overlapping fields of view are designed so that coverage is maintained even if a specific sensor is temporarily compromised.
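The re-weighting idea can be shown with a small sketch. Note that it swaps in a simpler technique than raw-data fusion: it fuses per-sensor range estimates with inverse-variance weights and drops occluded sensors, so the remaining sensors automatically carry more weight. The function name, sensor names, and numbers are hypothetical.

```python
def fuse_range(estimates):
    """Inverse-variance weighted average of per-sensor range estimates.

    estimates maps a sensor name to (range_m, variance_m2, occluded).
    Occluded sensors are skipped, which raises the relative weight of the rest.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for range_m, variance_m2, occluded in estimates.values():
        if occluded:
            continue
        weight = 1.0 / variance_m2
        weighted_sum += weight * range_m
        weight_total += weight
    if weight_total == 0.0:
        raise ValueError("no usable sensor estimates")
    return weighted_sum / weight_total


# Illustrative readings: the camera is occluded, so only LiDAR and radar count.
readings = {
    "camera": (50.0, 4.0, True),
    "lidar": (40.0, 1.0, False),
    "radar": (44.0, 1.0, False),
}
fused = fuse_range(readings)
```

With the camera excluded and equal variances on the other two sensors, the fused range is simply their average.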

Is the compute hardware custom silicon?

While specific chipset details are proprietary, the industry trend—and Waymo’s trajectory—points toward the use of custom accelerators (TPUs or similar ASICs) optimized for matrix multiplication, essential for running deep neural networks with low latency and high energy efficiency.
