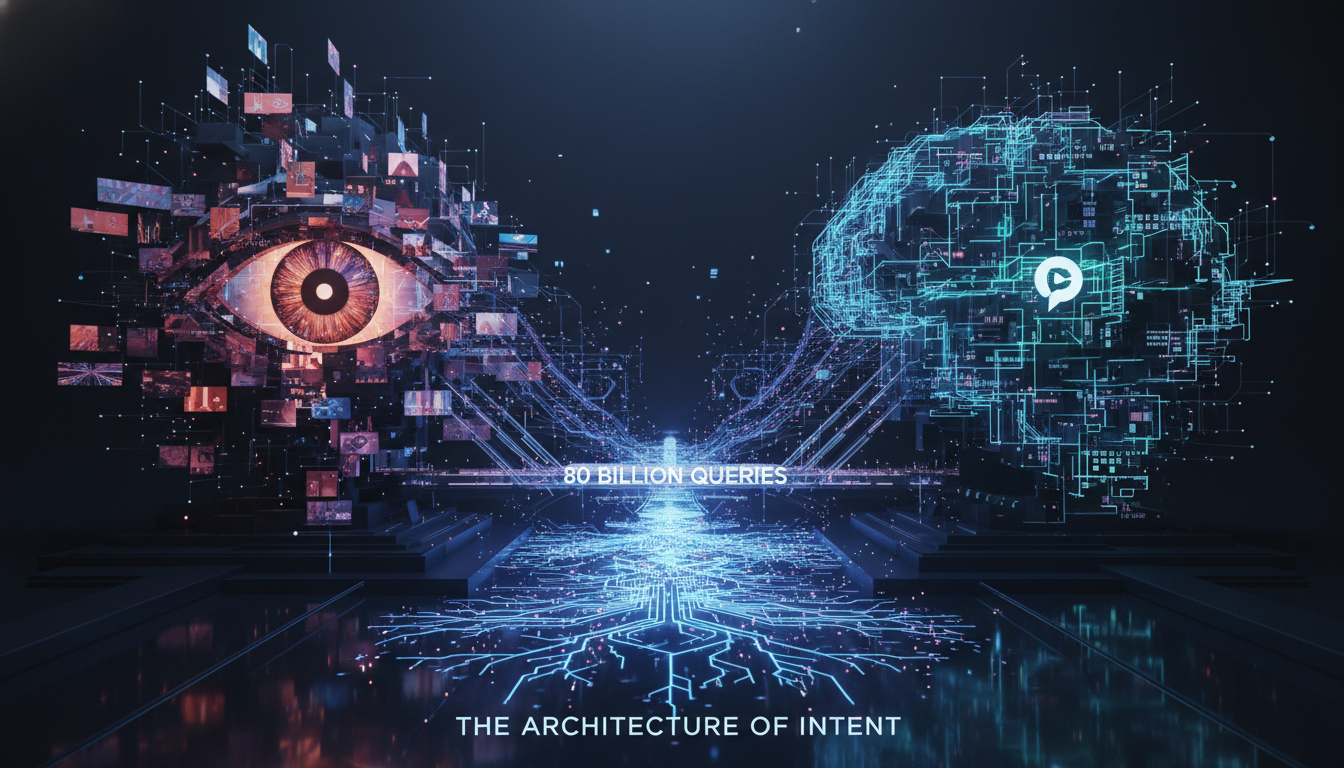

Pinterest’s 80 Billion Query Anomaly: Analyzing Visual Search Volume vs. ChatGPT’s Generative Inference

Executive Synthesis: While the market reacted negatively to Pinterest’s earnings miss, the same report surfaced a striking usage metric. The platform reports processing 80 billion searches monthly, surpassing current estimates for ChatGPT. This analysis examines the divergence between visual discovery graphs and Large Language Model (LLM) inference, and evaluates how sustainable Pinterest’s specialized vector search is in a generative era.

The Metric Paradox: Financial Miss vs. Interaction Density

In the high-stakes arena of algorithmic attention, a curious divergence occurred in the Q1 2026 reporting cycle. Pinterest (NYSE: PINS) faced significant headwinds regarding revenue realization, leading to a stock sell-off. However, buried within the earnings call was a statistic that challenges the current narrative of AI dominance: Pinterest claims to process approximately 80 billion searches per month. For context, this figure eclipses the estimated query volume of OpenAI’s ChatGPT, the reigning champion of the generative AI boom.
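To put the headline figure on an engineering scale, the monthly total can be converted into an average request rate. The sketch below assumes a 30-day month and a perfectly uniform load; neither assumption comes from the earnings call, and real traffic will peak well above the mean.

```python
# Back-of-envelope conversion of the reported monthly search count into an
# average request rate. The 30-day month and uniform load are assumptions.
SEARCHES_PER_MONTH = 80_000_000_000  # figure cited from the earnings call
DAYS_PER_MONTH = 30                  # assumption, not from the source
SECONDS_PER_DAY = 24 * 60 * 60


def average_qps(searches_per_month: int, days: int = DAYS_PER_MONTH) -> float:
    """Return the mean queries per second over a month of `days` days."""
    return searches_per_month / (days * SECONDS_PER_DAY)


if __name__ == "__main__":
    print(f"{average_qps(SEARCHES_PER_MONTH):,.0f} queries per second on average")
```

Under these assumptions the claim works out to roughly 31,000 queries per second, sustained around the clock.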

As technical architects, we must scrutinize this comparison. It is not merely a contest of numbers but a clash of fundamental information retrieval architectures. Pinterest operates on a Visual Discovery Engine powered by massive-scale graph neural networks (GNNs), whereas ChatGPT relies on Generative Pre-trained Transformer architectures. The latency, compute cost, and user intent behind a "search" on these two platforms are radically different.

Architecture of Intent: Vector Similarity vs. Token Generation

To understand how Pinterest generates such massive query volume compared to an LLM, we must analyze the friction and cost of the interaction layer.

Visual Embeddings and Low-Friction Discovery

Pinterest’s search mechanism is largely non-textual. It utilizes deep learning computer vision models to map images into a high-dimensional embedding space. When a user clicks a pin and scrolls, they are technically executing a "search"—a query for related items based on visual vectors (color, shape, object recognition) and semantic vectors (context, style).

- Interaction Mode: Passive, high-frequency. A single user session can trigger dozens of queries via the "More like this" algorithm without typing a single keyword.

- Technical Stack: Likely relies on highly optimized Approximate Nearest Neighbor (ANN) search algorithms (such as HNSW or quantization-based indexes) enabling millisecond-latency retrieval from a catalog of billions of objects.

- Compute Load: Retrieval is computationally cheaper than generation. With a graph-based ANN index such as HNSW, lookup cost grows roughly logarithmically with catalog size N, whereas autoregressive generation scales linearly with output length and is typically bound by GPU memory bandwidth.
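The "related pins" lookup described above can be illustrated with a toy nearest-neighbor query. This is a minimal sketch, not Pinterest's implementation: it performs an exact brute-force scan over every vector, which is O(N), whereas a production system would presumably use an ANN index to avoid touching the whole catalog. The pin names, the three-dimensional embeddings, and the function names are all hypothetical.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def related_pins(
    query_id: str, index: Dict[str, Sequence[float]], k: int = 2
) -> List[Tuple[str, float]]:
    """Return the k pins most similar to `query_id`, best match first.

    Exact scan over the whole index; an ANN index would approximate this
    result while visiting only a small fraction of the vectors.
    """
    query = index[query_id]
    scored = (
        (pin_id, cosine_similarity(query, vector))
        for pin_id, vector in index.items()
        if pin_id != query_id  # a pin is not its own recommendation
    )
    return heapq.nlargest(k, scored, key=lambda item: item[1])


# Hypothetical toy embeddings; real ones have hundreds of dimensions.
TOY_INDEX = {
    "rustic_sofa": [0.9, 0.1, 0.0],
    "rustic_table": [0.8, 0.2, 0.1],
    "neon_sign": [0.0, 0.1, 0.9],
    "oak_shelf": [0.7, 0.3, 0.0],
}

if __name__ == "__main__":
    for pin_id, score in related_pins("rustic_sofa", TOY_INDEX):
        print(f"{pin_id}: {score:.3f}")
```

Each click on a pin corresponds to one such query, with no keyword involved, which is how a single browsing session can accumulate dozens of "searches."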

Generative Inference: The Cost of Reasoning

Conversely, a "search" on ChatGPT is a high-intent, high-friction event. It requires the user to formulate a prompt and the system to run autoregressive inference, producing the answer one token at a time.

- Interaction Mode: Active, conversational. Users engage in multi-turn dialogue, but the frequency of distinct "searches" is lower than the rapid-fire visual consumption on Pinterest.

- Technical Stack: Transformer-based LLMs whose billions of parameters must be held in GPU memory (VRAM) for the duration of inference.

- Latency & Cost: Inference latency is perceptible. The cost per query is orders of magnitude higher than a vector lookup.

Therefore, while Pinterest wins on volume, ChatGPT likely wins on compute density per interaction. Pinterest aggregates micro-intents; ChatGPT resolves macro-problems.
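The gap in compute density can be roughed out with two common approximations. The sketch below assumes a graph-based ANN lookup takes about log2(N) hops with a fixed number of distance evaluations per hop, and that decoding with a dense transformer costs about 2 FLOPs per parameter per generated token. The catalog size, embedding dimension, `candidates_per_hop`, model size, and answer length are all placeholder values chosen for illustration, not figures for either company.

```python
import math


def retrieval_flops(num_vectors: int, dim: int, candidates_per_hop: int = 64) -> float:
    """Rough FLOPs for one graph-based ANN lookup.

    Assumes ~log2(N) hops, `candidates_per_hop` distance evaluations per hop,
    and ~2 * dim FLOPs per distance (one multiply and one add per dimension).
    """
    hops = math.log2(num_vectors)
    return 2.0 * dim * candidates_per_hop * hops


def generation_flops(num_parameters: float, output_tokens: int) -> float:
    """Rough FLOPs to decode `output_tokens` tokens with a dense transformer,
    using the ~2 FLOPs per parameter per token approximation."""
    return 2.0 * num_parameters * output_tokens


if __name__ == "__main__":
    # Placeholder inputs: 1B-vector catalog, 256-d embeddings,
    # 7B-parameter model, 200-token answer.
    lookup = retrieval_flops(num_vectors=1_000_000_000, dim=256)
    answer = generation_flops(num_parameters=7e9, output_tokens=200)
    print(f"lookup ~ {lookup:.2e} FLOPs")
    print(f"answer ~ {answer:.2e} FLOPs")
    print(f"ratio  ~ {answer / lookup:.1e}")
```

With these placeholder inputs the generated answer costs roughly six orders of magnitude more arithmetic than the lookup. The model ignores the cost of encoding the query, prompt processing, and memory-bandwidth effects, so it indicates scale only.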

The Monetization Gap: Why Search Volume Didn’t Save the Stock

If Pinterest processes more searches than the world’s leading AI, why were the earnings disappointing? The answer lies in the conversion capability of the retrieval architecture.

Intent Ambiguity in Visual Search

In marketing-funnel terms, Pinterest searches are often "top-of-funnel" or informational. A user searching for "rustic living room ideas" is looking for inspiration, not necessarily ready to buy. The semantic gap between seeing an image and purchasing a product remains the primary challenge. While Pinterest has integrated "shop the look" using object segmentation masks, the conversion funnel is longer and leakier than that of a direct textual query.

The Precision of LLM Queries

ChatGPT usage often reflects "bottom-of-funnel" or distinct operational needs (coding, writing, summarizing). While it is difficult to insert ads into a conversational stream without breaking user trust, the utility value is higher. Investors are currently betting that the AGI (Artificial General Intelligence) trajectory of LLMs will eventually capture the entire value chain, whereas Pinterest remains confined to the "inspiration" vertical.

Future Vectors: The Multimodal Convergence

The existential risk for Pinterest, and the reason for the market’s skepticism, is the rise of Multimodal Large Language Models (MLLMs) like GPT-4o and Gemini 1.5 Pro. These models accept images as input alongside text, so they can now "see."

When ChatGPT can process images with the same fidelity as text, it begins to encroach on Pinterest’s moat. If a user can upload a photo of a room to ChatGPT and ask, "Find me furniture that matches this aesthetic and create a shopping list with links," the distinct advantage of Pinterest’s visual graph begins to erode. This represents a shift from Graph-Based Retrieval to Generative Recommendation.

Pinterest must pivot from being a repository of static images to a dynamic engine of personalized curation, grounded in real items rather than generated ones that an LLM might hallucinate. Its proprietary dataset of user-curated boards acts as a large human-labeled preference signal, similar in spirit to "human-in-the-loop" feedback, that general-purpose AI models currently lack.

Technical Deep Dive FAQ: Search Architectures

How does Pinterest define a "search" compared to ChatGPT?

Pinterest likely counts both explicit keyword queries and implicit visual queries (clicking on a pin to see related pins) as searches. This creates a high-velocity feedback loop. ChatGPT likely counts distinct prompt submissions. The former is a traversal of a graph; the latter is a generation of a sequence.
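How much the counting rule matters can be seen on a single session log. The sketch below is hypothetical: the event names and the session are invented for illustration, and the source does not document how either company actually tallies searches.

```python
from collections import Counter
from typing import Iterable

# Hypothetical event taxonomy; not taken from any real logging schema.
EXPLICIT_EVENTS = {"keyword_query"}
IMPLICIT_EVENTS = {"related_pins_load"}


def count_searches(events: Iterable[str], include_implicit: bool = True) -> int:
    """Count searches in a session, optionally including implicit visual queries."""
    counts = Counter(events)
    total = sum(counts[name] for name in EXPLICIT_EVENTS)
    if include_implicit:
        total += sum(counts[name] for name in IMPLICIT_EVENTS)
    return total


# One invented browsing session: two typed queries, four pin click-throughs.
SESSION = [
    "keyword_query",
    "related_pins_load",
    "related_pins_load",
    "scroll",
    "related_pins_load",
    "keyword_query",
    "related_pins_load",
]

if __name__ == "__main__":
    print("explicit only:", count_searches(SESSION, include_implicit=False))
    print("explicit + implicit:", count_searches(SESSION))
```

For this invented session the tally is 2 searches under the explicit-only rule and 6 once implicit queries are included, a threefold difference from the definition alone.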

What is the role of Vector Databases in this comparison?

Both platforms rely on vector embeddings. Pinterest uses them to find nearest neighbors in image space (Visual Search). ChatGPT-style systems can use them for RAG (Retrieval-Augmented Generation) to ground their answers. However, vector retrieval is Pinterest’s core product, whereas for ChatGPT it is an auxiliary function of the generative process.

Can Pinterest integrate LLMs to improve revenue?

Yes. By using Vision-Language Models (VLMs), Pinterest can better understand the context of an image, not just its pixel distribution. This allows for hyper-targeted advertising (e.g., recognizing that a "messy room" pin might imply a need for organizational bins, not just furniture), thereby increasing ad relevance and ARPU (Average Revenue Per User).

Is the 80 Billion search metric sustainable?

Sustainability depends on serving cost per query relative to revenue per query. Pinterest’s retrieval model is highly efficient. However, as the company adds more AI features (generative backgrounds, canvas tools), its compute costs will rise. If it cannot monetize the traffic at a rate that outpaces the infrastructure costs of maintaining an exabyte-scale visual index, the volume becomes a liability rather than an asset.
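The sustainability condition can be expressed as a simple break-even check. The sketch below blends a cheap retrieval cost with a more expensive generative cost according to the share of queries that invoke generative features. Every dollar figure is a placeholder chosen for illustration; none is an actual Pinterest number, and the function names are hypothetical.

```python
def blended_cost_per_1k(
    retrieval_cost: float, generative_cost: float, generative_share: float
) -> float:
    """Average serving cost per 1,000 queries when `generative_share` of
    queries (between 0 and 1) use generative features and the rest are
    plain retrieval."""
    if not 0.0 <= generative_share <= 1.0:
        raise ValueError("generative_share must be between 0 and 1")
    return (1.0 - generative_share) * retrieval_cost + generative_share * generative_cost


def is_sustainable(revenue_per_1k: float, cost_per_1k: float) -> bool:
    """True when revenue per 1,000 queries exceeds the cost of serving them."""
    return revenue_per_1k > cost_per_1k


if __name__ == "__main__":
    # Placeholder values in dollars per 1,000 queries.
    REVENUE = 0.10
    RETRIEVAL_COST = 0.01
    GENERATIVE_COST = 2.00
    for share in (0.0, 0.02, 0.05):
        cost = blended_cost_per_1k(RETRIEVAL_COST, GENERATIVE_COST, share)
        print(f"share={share:.0%} cost={cost:.4f} sustainable={is_sustainable(REVENUE, cost)}")
```

Under these placeholder values, moving only 5% of queries onto generative features is enough to push the blended cost above revenue, which is the dynamic the answer above warns about.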