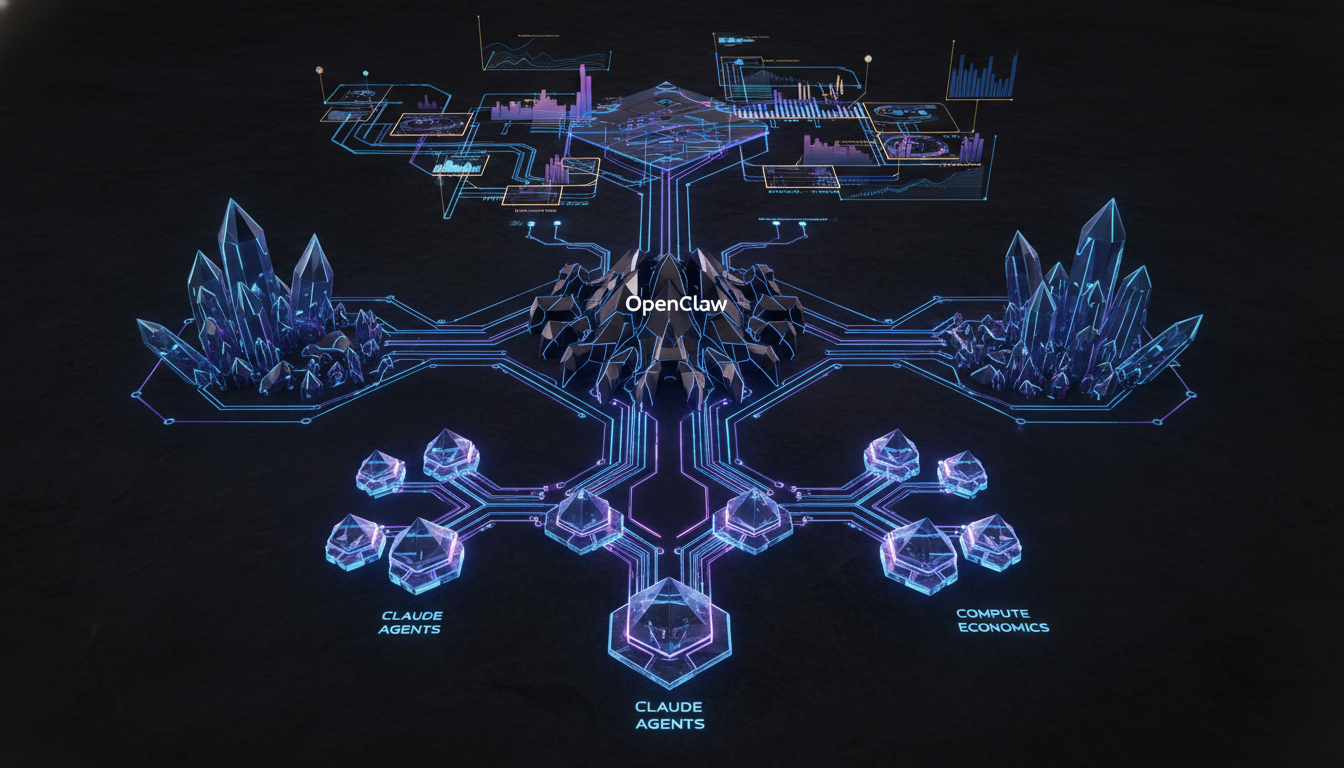

The Paradigm Shift in Agentic AI Economics: Decrypting the New Metrology of Claude and OpenClaw

The era of heavily subsidized agentic frameworks is sunsetting. As a Senior Architect navigating the bleeding edge of AI inference, I view the recent pivot in OpenClaw pricing as more than just a commercial recalibration—it signals a fundamental maturation in the computational logistics of large language models (LLMs). OpenClaw, the highly integrated framework designed to tether OpenAI-like tooling ecosystems with Anthropic’s Claude models, has officially transitioned from a complimentary sandbox abstraction to a rigorously metered computational resource. This architectural shift demands a profound re-evaluation of how we construct, deploy, and optimize autonomous agents in production environments.

For engineering teams reliant on Claude’s sophisticated reasoning capabilities, the introduction of a hard paywall for OpenClaw fundamentally alters the deployment calculus. It is no longer sufficient to merely chain prompts together in a zero-shot or few-shot ReAct (Reasoning and Acting) loop; developers must now account for the steep computational overhead inherent to continuous token generation and multi-step inference latency. In this extensive technical pillar, we will dissect the underlying mechanics of this pricing shift, explore the infrastructural demands that necessitate such a model, and outline advanced mitigation strategies—ranging from Parameter-Efficient Fine-Tuning (PEFT) to hyper-optimized Retrieval-Augmented Generation (RAG) pipelines—to maintain economic viability in your AI architectures.

The Computational Reality Behind OpenClaw Pricing

To understand why the OpenClaw pricing structure has been instituted, one must look directly at the underlying Transformer architecture and the severe infrastructural toll of agentic loops. Unlike standard conversational interfaces where a user inputs a discrete prompt and receives a discrete response, OpenClaw operates as a persistent middleware. It intercepts, translates, and routes tool-use requests—frequently polling APIs, executing local code, and maintaining state across extended sessions.

Inference Latency and the Cost of Continuous Token Generation

Every time an OpenClaw agent invokes a tool, the LLM must generate a highly structured output (typically JSON or XML). Autoregressive decoding runs one forward pass through the network per generated token, so a verbose tool call costs hundreds of passes. When we discuss inference latency in this context, we are not just talking about Time to First Token (TTFT); we are addressing the cumulative latency of Inter-Token Time (ITT, the gap between successive output tokens) stretched across hundreds of hidden reasoning steps. To a first approximation, the compute per generated token scales with the number of active parameters, plus an attention term that grows with the length of the sequence already in context. Anthropic does not publish parameter counts for its models, but frontier-scale models of this class consume substantial VRAM and compute cycles. The OpenClaw pricing model reflects the GPU hours burned during these iterative loops.
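To make the latency argument concrete, here is a minimal sketch of how per-call latency accumulates over a sequential agent loop. The function names and the timing figures are assumptions chosen for illustration; they are not measurements of Claude or OpenClaw.

```python
def call_latency(ttft_s, inter_token_s, output_tokens):
    """Latency of one streamed call: time to first token, then one gap per later token."""
    return ttft_s + inter_token_s * max(output_tokens - 1, 0)


def loop_latency(output_token_counts, ttft_s, inter_token_s):
    """Model-side latency of a sequential agent loop (tool execution time excluded)."""
    return sum(call_latency(ttft_s, inter_token_s, n) for n in output_token_counts)


# Hypothetical figures: 0.5 s TTFT, 20 ms between tokens, 12 calls of 300 output tokens.
total = loop_latency([300] * 12, ttft_s=0.5, inter_token_s=0.02)
print(f"model-side latency for the loop: {total:.2f} s")
```

Under these assumed numbers, TTFT accounts for only 6 of roughly 78 seconds; the rest is inter-token time, which is why verbose structured outputs dominate the latency of a loop.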

Context Window Saturation and KV Cache Economics

One of the most profound bottlenecks in scaling autonomous agents is the Key-Value (KV) cache. As an OpenClaw session progresses, it accumulates a conversational history, tool outputs, and environmental observations. The Transformer architecture requires storing the key and value tensors for all previous tokens in the sequence to compute attention for the next token. When a context window stretches to 100k or 200k tokens, the KV cache footprint in GPU memory grows linearly with sequence length, while the attention compute needed to process the full sequence grows quadratically under standard attention. Maintaining this state across API calls is expensive for the provider, and the new billing models are tied to this memory saturation. Developers are effectively penalized for bloat, which pushes them toward leaner context management: state summarization and history truncation on the client side, and more aggressive KV cache eviction policies for any self-hosted models.
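The linear growth of the KV cache can be estimated with simple arithmetic. The sketch below uses a made-up model configuration; it does not describe any Claude model, whose dimensions are not public.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV cache size for one sequence.

    The leading factor of 2 accounts for storing both a key and a value tensor
    per layer, per KV head, per token.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical configuration (an assumption for illustration only):
# 80 layers, 8 KV heads, head dimension 128, 16-bit values.
HYPOTHETICAL = dict(n_layers=80, n_kv_heads=8, head_dim=128, bytes_per_value=2)

for tokens in (1_000, 100_000, 200_000):
    gib = kv_cache_bytes(tokens, **HYPOTHETICAL) / 2**30
    print(f"{tokens:>7} tokens -> {gib:6.2f} GiB")
```

With these assumed dimensions, each token costs 327,680 bytes of cache, so a single 100k-token session occupies roughly 30 GiB and a 200k-token session twice that.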

Architecting for Economic Resilience: Mitigating API Overhead

With the implementation of the new OpenClaw pricing tiers, brute-force agentic workflows are economically insolvent. Senior architects must transition from naive prompt engineering to systemic prompt compression and contextual routing. This involves stripping away redundant tokens, running any self-hosted components at lower precision (quantized weights) where accuracy permits, and implementing intelligent gating mechanisms that dictate when an expensive Claude API call is genuinely necessary.

Advanced RAG Optimization Strategies

Retrieval-Augmented Generation (RAG) optimization is no longer just about improving semantic relevance; it is a critical cost-control lever. In an OpenClaw ecosystem, poorly optimized RAG pipelines that inject massive, uncompressed document chunks into the context window will rapidly inflate the bill. To avoid this, architectures must evolve. We must deploy dense embedding models with precise semantic chunking algorithms so that retrieval returns only the most pertinent chunks. Furthermore, utilizing re-ranking models (such as Cohere’s Rerank or cross-encoders) before injecting context into the OpenClaw pipeline ensures that the context window remains lean. By maximizing information density per token, we drastically reduce the overhead subject to OpenClaw pricing constraints.
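One way to keep the context lean after re-ranking is to enforce an explicit token budget. The following is a minimal sketch, assuming each chunk already carries a relevance score from a re-ranker; `Chunk`, `estimate_tokens`, and `select_chunks` are hypothetical names, and the four-characters-per-token estimate is a rough stand-in for a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    score: float  # relevance score from a re-ranker (higher is better)


def estimate_tokens(text):
    """Crude estimate (about 4 characters per token); swap in a real tokenizer."""
    return max(1, len(text) // 4)


def select_chunks(chunks, token_budget):
    """Greedily keep the highest-scoring chunks that fit within token_budget."""
    selected, used = [], 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        cost = estimate_tokens(chunk.text)
        if used + cost <= token_budget:
            selected.append(chunk)
            used += cost
    return selected, used


# Hypothetical retrieval results: two short chunks and one long one.
candidates = [Chunk("a" * 400, 0.9), Chunk("b" * 400, 0.5), Chunk("c" * 2000, 0.8)]
kept, used = select_chunks(candidates, token_budget=250)
print(f"kept {len(kept)} chunks using about {used} tokens")
```

The greedy pass skips the long mid-ranked chunk because it would exceed the budget, then still admits the shorter lower-ranked one. Whether that trade-off is acceptable depends on the task; a stricter variant could stop at the first chunk that does not fit.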

Semantic Routing and Hybrid Inference Models

Not every node in a complex decision tree requires the reasoning capabilities of a frontier model like Claude 3.5 Sonnet or Opus. To master the new economic paradigm, we must implement Semantic Routing. This involves using an ultra-fast, locally hosted model (e.g., Llama 3 8B quantized) or a heavily optimized SLM (Small Language Model) to handle trivial routing tasks, intent classification, and basic tool execution. The OpenClaw pipeline should be reserved strictly for deep reasoning bottlenecks and complex synthesis tasks. By tracking these execution paths using platforms like Weights and Biases (WandB), engineering teams can visualize exactly which nodes are driving up API costs and systematically replace them with localized inference or Parameter-Efficient Fine-Tuning (PEFT) adaptations of smaller models.
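The routing idea can be sketched in a few lines. This is an illustrative sketch rather than OpenClaw's actual mechanism: `route`, `LOCAL_INTENTS`, and the toy `keyword_classifier` are hypothetical, and in practice the classifier would be a small local model or an embedding-based matcher.

```python
from typing import Callable

# Intents assumed (for illustration) to be simple enough for a small local model.
LOCAL_INTENTS = {"greeting", "lookup", "format_conversion"}


def route(request: str,
          classify: Callable[[str], tuple[str, float]],
          min_confidence: float = 0.8) -> str:
    """Return "local" or "frontier" for a request.

    classify is any cheap intent classifier returning (intent, confidence).
    Unknown or low-confidence intents fall through to the frontier model.
    """
    intent, confidence = classify(request)
    if intent in LOCAL_INTENTS and confidence >= min_confidence:
        return "local"
    return "frontier"


def keyword_classifier(request: str) -> tuple[str, float]:
    """Toy stand-in for a small model or embedding-based classifier."""
    words = request.lower().split()
    first = words[0].strip(",.!?") if words else ""
    if first in {"hi", "hello"}:
        return "greeting", 0.95
    if "convert" in words:
        return "format_conversion", 0.85
    return "unknown", 0.0


print(route("Convert this CSV to JSON", keyword_classifier))
print(route("Plan a multi-step database migration", keyword_classifier))
```

Defaulting to the frontier model whenever the classifier is unsure keeps the failure mode cheap to reason about: a misroute costs money, not correctness.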

The Role of Parameter-Efficient Fine-Tuning (PEFT) and LoRA

As the OpenClaw pricing model constricts operational budgets, many enterprise teams are looking toward self-hosting highly specialized models to offload specific agentic sub-tasks. Low-Rank Adaptation (LoRA) and other PEFT methodologies allow us to take open-weight models and train them on the exact JSON structures and tool-use patterns that OpenClaw normally translates. LoRA freezes the base weights and trains a pair of small low-rank matrices whose product is added to selected weight matrices, most commonly the attention projections. Applied to a smaller model, this can approach Claude-level structural compliance for specific, narrow APIs at a fraction of the inference cost. This hybrid architecture, where OpenClaw acts as the overarching orchestrator while localized PEFT models handle high-frequency, low-complexity tool calls, represents the gold standard for modern AI infrastructure.
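The efficiency of LoRA comes from how few parameters the low-rank factors contain. A quick arithmetic sketch, using a hypothetical 4096 x 4096 projection matrix and rank 8 (both values are assumptions for illustration):

```python
def lora_trainable_params(d_in, d_out, rank):
    """Parameters in the two low-rank factors: A (rank x d_in) and B (d_out x rank)."""
    return rank * d_in + d_out * rank


def full_params(d_in, d_out):
    """Parameters in the full weight matrix that LoRA leaves frozen."""
    return d_in * d_out


d = 4096  # hypothetical hidden size
lora = lora_trainable_params(d, d, rank=8)
full = full_params(d, d)
print(f"LoRA trains {lora:,} of {full:,} weights ({lora / full:.2%})")
```

For this assumed shape, the adapter trains 65,536 parameters against 16,777,216 frozen ones, about 0.39% of the matrix.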

Refactoring the Developer Experience: Monitoring Weights and Biases

Visibility is the antidote to runaway billing. Integrating robust telemetry into your LLM pipelines is mandatory. By hooking OpenClaw executions into monitoring frameworks like Weights & Biases (W&B) or LangSmith, architects can trace the exact token consumption, latency metrics, and API payloads for every step of an agent’s lifecycle. You must track the distribution of token counts across different tools. If a specific database query tool consistently returns unoptimized, heavily padded JSON responses that are then fed back into Claude, the telemetry will surface this anomaly. You can then write a deterministic Python parser to strip whitespace and irrelevant fields from the tool output before it hits the LLM, shaving thousands of tokens off your daily compute bill.
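A deterministic parser of the kind described above might look like the following sketch. The function name `compact_tool_output` and the field names in the example are hypothetical; the approach assumes the tool returns a JSON object or a list of JSON objects.

```python
import json


def compact_tool_output(raw_json: str, keep_fields: set[str]) -> str:
    """Keep only whitelisted top-level fields of each record and drop whitespace.

    Always returns a JSON list, even when the input was a single object.
    """
    data = json.loads(raw_json)
    records = data if isinstance(data, list) else [data]
    trimmed = [{k: v for k, v in rec.items() if k in keep_fields} for rec in records]
    # separators=(",", ":") removes the spaces json.dumps inserts by default.
    return json.dumps(trimmed, separators=(",", ":"))


# Hypothetical padded tool response with a field the model never needs.
raw = json.dumps([{"id": 1, "name": "a", "debug": "x" * 100}], indent=4)
compact = compact_tool_output(raw, {"id", "name"})
print(f"{len(raw)} characters -> {len(compact)} characters")
```

Because the filter is a whitelist, newly added fields in the tool's response are dropped by default, which keeps the token footprint stable as upstream schemas change.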

Conclusion: Embracing the Metrology of AI Compute

The transition to a paid OpenClaw model is a forcing function for engineering discipline. It strips away the illusion of infinite compute and grounds AI development in the rigorous reality of distributed systems architecture. By embracing advanced techniques such as KV cache optimization, aggressive RAG pruning, semantic routing, and hybrid localized inference, we can build autonomous systems that are not only far more scalable but also economically sustainable. The future of AI does not belong to those who can brute-force the largest context windows; it belongs to the architects who can extract the maximum cognitive yield per token.

Technical Deep Dive FAQ

What exactly drives the high cost of agentic AI frameworks like OpenClaw?

The primary driver is iterative context window expansion. In an agentic loop, the model must read the original prompt, the history of its thoughts, the outputs of previously invoked tools, and the current state before generating the next action. Because each sequential API call resends and reprocesses this growing context (unless a cache absorbs part of it), cumulative token usage grows roughly quadratically with the number of steps, far faster than a single-turn query would suggest.
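The growth pattern is easy to check with a small calculation. This sketch assumes a fixed number of tokens is appended per step and that the full history is resent on every call; the figures are hypothetical and ignore any prompt-caching discounts.

```python
def cumulative_input_tokens(base_prompt, tokens_per_step, n_steps):
    """Total input tokens across an agent loop that resends its full history.

    Step k (0-indexed) sends the base prompt plus everything appended by the
    k previous steps.
    """
    return sum(base_prompt + k * tokens_per_step for k in range(n_steps))


# Hypothetical numbers: 2,000-token prompt, 1,500 tokens appended per step.
for steps in (1, 10, 20, 40):
    total = cumulative_input_tokens(2_000, 1_500, steps)
    print(f"{steps:>2} steps -> {total:>9,} input tokens")
```

Going from 20 to 40 steps raises the total from 325,000 to 1,250,000 input tokens under these assumptions: doubling the loop length nearly quadruples the input volume.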

How can RAG optimization directly reduce OpenClaw billing?

By implementing aggressive chunking, metadata filtering, and post-retrieval re-ranking, RAG optimization ensures that only the highest-fidelity, most relevant information is injected into the prompt. Reducing a retrieved context payload from 10,000 tokens to a highly dense 1,500 tokens immediately slashes the input token costs for every subsequent step in the OpenClaw reasoning loop.
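As a rough worked example of that reduction, the sketch below compares the input cost of carrying the retrieved payload through every step of a loop. The price and step count are placeholders, not actual OpenClaw or Anthropic rates.

```python
def input_cost(tokens_per_call, n_calls, usd_per_million_tokens):
    """Input cost in USD for a payload that is resent on every call."""
    return tokens_per_call * n_calls * usd_per_million_tokens / 1_000_000


PRICE = 3.00  # hypothetical USD per million input tokens
STEPS = 20    # hypothetical number of calls in one agent run

before = input_cost(10_000, STEPS, PRICE)
after = input_cost(1_500, STEPS, PRICE)
print(f"before: ${before:.2f}  after: ${after:.2f}  saved: {1 - after / before:.0%}")
```

The 85% saving follows directly from the token ratio and holds at any price or step count; only the absolute dollar figures depend on the assumed rate.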

Is it possible to circumvent the new pricing by using local models entirely?

While trivial tasks can be offloaded to local open-weight models using PEFT and LoRA to match the required tool-use formatting, complex zero-shot reasoning and deep synthesis still heavily favor frontier models like Claude. The optimal architecture is a hybrid one: local models for routing and basic API interaction, and OpenClaw for complex decision-making nodes.

How does inference latency correlate with pricing in this new model?

While pricing is quoted per token (Anthropic lists its API prices per million tokens), inference latency is a rough proxy for compute utilization. Models that take longer to generate responses are occupying VRAM and compute units for extended periods. Serving-side optimizations such as FlashAttention or speculative decoding can reduce latency and, with it, the underlying cost of serving. Fixed API pricing tiers do not automatically track those gains, though; they appear designed to guarantee a margin on the worst-case scenario of heavy tool-use iteration.
