Game Arena Architecture: Deconstructing Google DeepMind’s Shift to Agentic Benchmarking

Executive Synthesis: The era of static LLM evaluation is effectively over. As models saturate traditional benchmarks like MMLU and GSM8K, Google DeepMind’s open-sourcing of Game Arena via Kaggle signals a pivotal transition toward dynamic, multi-agent reinforcement learning (MARL) environments. This analysis dissects the technical implications of using adversarial gaming substrates to evaluate reasoning, planning, and Theory of Mind in next-generation AI agents.

The Obsolescence of Static Evaluation: Why We Needed Game Arena

For the past three years, the AI research community has relied heavily on static datasets—snapshot evaluations that measure a model’s ability to retrieve information or solve isolated logic puzzles. While effective for initial large language model (LLM) scaling, these benchmarks suffer from data contamination and rote memorization. They fail to capture the agentic capabilities required for real-world deployment: long-horizon planning, adversarial adaptation, and negotiation.

Google DeepMind’s Game Arena creates a standardized, reproducible framework that moves evaluation from text completion to state-space navigation. By leveraging the Kaggle platform, DeepMind has effectively Dockerized the evaluation process, allowing researchers to pit agents against one another in environments defined by stochasticity and partial observability. This is not merely “games for AI”; it is a rigorous stress test for the generalization capabilities of Transformer-based architectures when forced to interact with uncooperative dynamic entities.

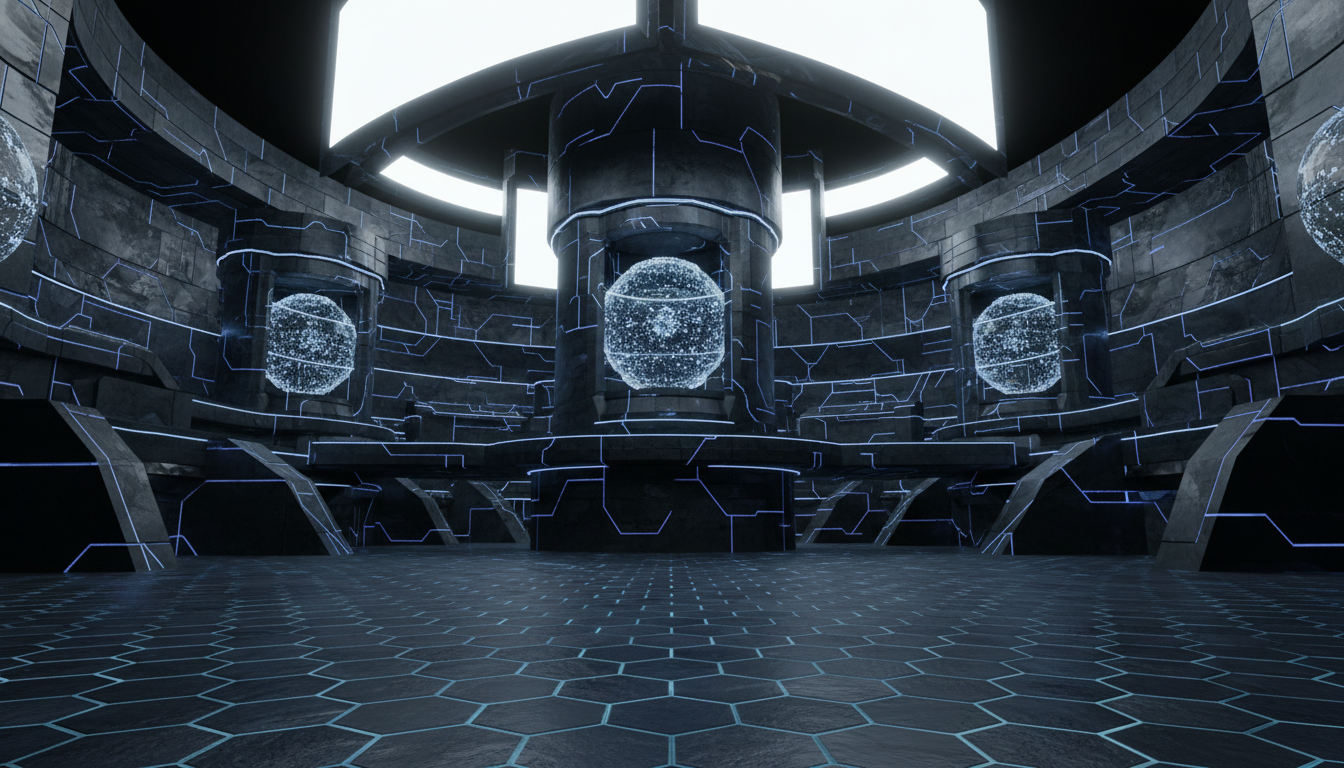

Architectural Deep Dive: The Game Arena Environment

Decoupling Simulation from Inference

At the core of the Game Arena infrastructure is a necessary decoupling of the environment simulation loop from the agent’s inference cycle. In traditional RL setups (like OpenAI Gym), the two are often tightly coupled: the environment blocks until the agent returns an action. For scalable evaluation of very large models (70B+ parameters), however, the architecture must tolerate inference latency without halting the simulation logic.

The Game Arena implementation on Kaggle utilizes a modular containerization approach. Agents operate within isolated sandboxes, communicating actions via strictly typed APIs. This design choice mirrors microservices architecture, ensuring that a crash or hallucination in one agent does not destabilize the environment kernel. This robustness is critical when evaluating non-deterministic models that may output invalid action tokens.
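The isolation idea above can be illustrated with a minimal sketch. It is not Game Arena's actual API: the `Action` schema, the `FALLBACK` move, and the `request_action` helper are all hypothetical names, and a thread pool stands in for the container boundary. It only shows how an environment loop might keep running when an agent is slow, crashes, or returns something that violates the typed contract.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    """Typed action message. The single `move` field is a hypothetical schema."""
    move: str


# Hypothetical default applied when an agent is late, crashes, or replies badly.
FALLBACK = Action(move="pass")


def request_action(
    agent: Callable[[str], object],
    observation: str,
    timeout_s: float,
    pool: ThreadPoolExecutor,
) -> Action:
    """Ask one agent for a move without letting it stall or crash the caller."""
    future = pool.submit(agent, observation)
    try:
        result = future.result(timeout=timeout_s)
    except FutureTimeout:
        return FALLBACK  # inference too slow: the simulation moves on
    except Exception:
        return FALLBACK  # agent crashed: the failure stays contained
    # Enforce the typed contract: anything that is not an Action is rejected.
    return result if isinstance(result, Action) else FALLBACK
```

A thread cannot be forcibly stopped, so a timed-out agent keeps running in the background here. A production sandbox would more plausibly use separate processes or containers, which is the design the article describes.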

From Perfect Information to Partial Observability

One of the most significant technical leaps in Game Arena is the prioritization of games involving hidden information (e.g., Poker, Stratego-style mechanics). In Chess or Go (perfect-information games), the entire game state is visible to both players, so search methods such as Monte Carlo Tree Search (MCTS) can operate directly on the true state. However, real-world deployment of AI requires navigating Partially Observable Markov Decision Processes (POMDPs).

The Game Arena environments force the AI to maintain an internal “world model”: a probability distribution over the opponent’s hidden state, usually called a belief state. Technically, this evaluates the model’s capacity for Bayesian inference over time. An agent cannot simply calculate the best move from the visible board; it must infer the intent and hidden resources of the adversary from historical action sequences.
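As a rough illustration of what updating such a belief involves, the sketch below applies Bayes' rule to a toy poker-like situation. The hypotheses ("strong" and "weak" hands) and the likelihood numbers are invented for the example; nothing here is taken from a Game Arena environment.

```python
from typing import Dict


def update_belief(prior: Dict[str, float], likelihood: Dict[str, float]) -> Dict[str, float]:
    """One Bayes step: posterior(h) is proportional to prior(h) * P(observed action | h)."""
    unnormalized = {h: prior[h] * likelihood.get(h, 0.0) for h in prior}
    total = sum(unnormalized.values())
    if total == 0.0:
        raise ValueError("observed action has zero probability under every hypothesis")
    return {h: p / total for h, p in unnormalized.items()}


# Hypothetical numbers: the opponent raises 80% of the time with a strong hand
# and 20% of the time with a weak one.
prior = {"strong": 0.5, "weak": 0.5}
raise_likelihood = {"strong": 0.8, "weak": 0.2}

after_one_raise = update_belief(prior, raise_likelihood)
after_two_raises = update_belief(after_one_raise, raise_likelihood)
```

Each observed action shifts the distribution, so the belief after two raises leans further toward "strong" than after one. An LLM agent has to perform something equivalent implicitly, from the action history in its context.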

Linguistic Interaction and Negotiation Protocols

Unlike purely non-linguistic RL environments (like MuJoCo), Game Arena integrates linguistic negotiation into the gameplay loop. This is where Large Language Models shine compared to traditional Deep Q-Networks (DQN). The environment allows for, and evaluates, natural language diplomacy.

The “Diplomacy” Problem

In multi-agent scenarios, the optimal strategy often involves coalition building. The architecture evaluates the model’s ability to:

- Detect Deception: Analyzing semantic inconsistencies in an opponent’s dialogue.

- Formulate Binding Contracts: Generating text that commits to a future state (and adhering to it).

- Strategic Betrayal: Calculating the precise utility function crossover point where breaking an alliance yields higher expected value (EV) than maintaining it.

This moves benchmarking into the realm of social intelligence evaluation, a frontier previously untestable in static code or math benchmarks.
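The “crossover point” in the betrayal bullet can be made concrete with a toy model. The payoff structure below is an assumption made for illustration, not something defined by Game Arena: an alliance pays a fixed amount per round, betrayal pays a one-time gain, and every round after the betrayal pays a lower “punished” amount.

```python
from typing import Optional


def ev_maintain(coop_payoff: float, rounds_left: int) -> float:
    """Expected value of keeping the alliance for all remaining rounds."""
    return coop_payoff * rounds_left


def ev_betray(betray_gain: float, punished_payoff: float, rounds_left: int) -> float:
    """Expected value of betraying now, then being punished in every later round."""
    return betray_gain + punished_payoff * (rounds_left - 1)


def first_profitable_betrayal(
    coop_payoff: float,
    betray_gain: float,
    punished_payoff: float,
    total_rounds: int,
) -> Optional[int]:
    """Return the first 0-indexed round where betrayal beats loyalty, or None."""
    for round_index in range(total_rounds):
        rounds_left = total_rounds - round_index
        if ev_betray(betray_gain, punished_payoff, rounds_left) > ev_maintain(
            coop_payoff, rounds_left
        ):
            return round_index
    return None
```

With a cooperation payoff of 3, a betrayal gain of 5, a punished payoff of 1, and 10 rounds, betrayal only becomes profitable in the final round (index 9), because any earlier defection forfeits too many future cooperative rounds. Real negotiation games add uncertainty about the opponent's own defection, which this sketch ignores.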

Scoring Beyond Accuracy: Elo and Nash Equilibrium

Traditional accuracy metrics (pass/fail) are insufficient for adversarial environments. Game Arena adopts an Elo rating system, similar to competitive chess, but adapted for heterogeneous agent populations. This provides a relative skill metric that is updated after every match.
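For reference, the textbook Elo update is sketched below. This is the standard chess formula, not necessarily the adapted variant Game Arena uses, and the K-factor of 32 is a common default chosen here as an assumption.

```python
from typing import Tuple


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability-like expected score of A against B under the Elo model."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


def update_elo(
    rating_a: float,
    rating_b: float,
    score_a: float,
    k: float = 32.0,  # assumed K-factor; real systems tune this
) -> Tuple[float, float]:
    """Return new ratings for A and B. score_a is 1 for a win, 0.5 draw, 0 loss."""
    exp_a = expected_score(rating_a, rating_b)
    delta = k * (score_a - exp_a)
    # Zero-sum update: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta
```

Two agents rated 1500 each have an expected score of 0.5, so a win moves the winner to 1516 and the loser to 1484. An upset against a much higher-rated opponent moves both ratings by more, which is what lets the scale track relative skill over time.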

Transitivity and Cyclic Dominance

A critical technical challenge in this benchmarking approach is non-transitivity (e.g., Rock-Paper-Scissors scenarios where Strategy A beats B, B beats C, but C beats A). A single scalar Elo score often fails to capture these dynamics. The Game Arena framework likely employs multi-dimensional skill vectors to map the Pareto frontier of agent capabilities. High-performing agents are those that approximate a Nash Equilibrium, where no unilateral deviation in strategy can improve their outcome against a set of optimal opponents.
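Rock-Paper-Scissors itself makes both ideas checkable in a few lines. The sketch below is a generic illustration, not Game Arena code: it encodes the cyclic payoff matrix and tests whether a mixed strategy is a symmetric Nash equilibrium by checking that no pure-strategy deviation earns more than the strategy earns against itself.

```python
from typing import List

MOVES = ["rock", "paper", "scissors"]

# PAYOFF[i][j] is the row player's payoff when playing MOVES[i] against MOVES[j].
PAYOFF = [
    [0, -1, 1],   # rock: ties rock, loses to paper, beats scissors
    [1, 0, -1],   # paper: beats rock, ties paper, loses to scissors
    [-1, 1, 0],   # scissors: loses to rock, beats paper, ties scissors
]


def expected_payoff(row_mix: List[float], col_mix: List[float]) -> float:
    """Expected payoff to the row player when both sides play mixed strategies."""
    return sum(
        row_mix[i] * col_mix[j] * PAYOFF[i][j]
        for i in range(len(MOVES))
        for j in range(len(MOVES))
    )


def best_deviation_value(col_mix: List[float]) -> float:
    """Best payoff any single pure move can achieve against col_mix."""
    return max(
        sum(col_mix[j] * PAYOFF[i][j] for j in range(len(MOVES)))
        for i in range(len(MOVES))
    )


def is_symmetric_nash(mix: List[float], tol: float = 1e-9) -> bool:
    """True if no unilateral pure deviation beats playing mix against mix."""
    return best_deviation_value(mix) <= expected_payoff(mix, mix) + tol
```

The uniform mix passes the check, while "always rock" fails because switching to paper wins outright. No pure strategy dominates the others, which is exactly the cycle a single scalar rating cannot represent.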

Technical Implications for Fine-Tuning (RLEF vs. RLHF)

The existence of Game Arena accelerates the shift from Reinforcement Learning from Human Feedback (RLHF) to Reinforcement Learning from Environmental Feedback (RLEF). Human labeling is expensive, slow, and prone to bias. Game environments, by contrast, provide an effectively unlimited stream of ground-truth feedback signals (win/loss/resource gain), limited only by available compute.

Engineers can now set up self-play loops where models fine-tune against copies of themselves or diversified adversarial zoos. These autocurricula, in which the environment automatically generates increasingly difficult scenarios as the agent improves, are the mechanism that will likely drive the next jump in reasoning capabilities, moving us closer to System 2 thinking in AI.

Technical Deep Dive FAQ

How does Game Arena handle the stochastic nature of LLM outputs?

The framework employs strict action-space parsing. The LLM outputs text/tokens, which a middleware wrapper attempts to parse into valid game moves. If the parsing fails (e.g., the model hallucinates an invalid move), the environment registers a ‘technical foul’ or a no-op, often penalizing the agent. This forces models to learn strict adherence to syntax constraints alongside strategy.
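A minimal sketch of such a parsing wrapper follows. The move format (coordinate chess notation such as `e2e4`), the `FOUL_PENALTY` value, and the `parse_action` name are assumptions chosen for illustration; the actual middleware and penalty scheme are not specified in this article.

```python
import re
from typing import Optional, Set, Tuple

# Assumed move format: coordinate notation like "e2e4".
MOVE_PATTERN = re.compile(r"\b([a-h][1-8][a-h][1-8])\b")

# Hypothetical penalty applied for a 'technical foul'.
FOUL_PENALTY = -1.0


def parse_action(raw_output: str, legal_moves: Set[str]) -> Tuple[Optional[str], float]:
    """Extract the first move-shaped token from free text and validate it.

    Returns (move, 0.0) for a legal move, or (None, FOUL_PENALTY) when the
    text contains no move or the first candidate is not legal in this state.
    """
    match = MOVE_PATTERN.search(raw_output.lower())
    if match and match.group(1) in legal_moves:
        return match.group(1), 0.0
    return None, FOUL_PENALTY
```

Given the legal set `{"e2e4", "d2d4"}`, the reply "I will play e2e4." parses cleanly, while "Knight to z9" or a well-formed but illegal `e2e5` both register a foul. Validating only the first candidate is a deliberately strict choice; a more lenient wrapper could scan for any legal move in the text.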

Can this framework evaluate multimodal models?

While the current iteration focuses heavily on logic and text-based interaction, the underlying architecture supports arbitrary state observations. Future implementations could feed visual frames (pixel data) directly to the agent, requiring Vision-Language Models (VLMs) to interpret the board state visually rather than via JSON text descriptions.

What is the computational overhead compared to MMLU?

Significantly higher. MMLU requires a single forward pass per question. Game Arena requires multi-turn episodes, often lasting hundreds of steps. The number of model calls therefore grows linearly with game length, and the token cost can grow faster still if each turn re-sends the accumulated game history. However, the density of the signal (evaluating planning, consistency, and adaptation) provides a much higher “bits per token” evaluation value.
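A back-of-the-envelope estimate shows how game length drives cost. The token counts below are invented for the example, and the two functions model two assumed prompting regimes: one where each turn sees a fixed-size context, and one where each turn re-sends the full history so far.

```python
def tokens_constant_context(turns: int, base_tokens: int, tokens_per_turn: int) -> int:
    """Total prompt tokens when every turn sends the same fixed-size context."""
    return turns * (base_tokens + tokens_per_turn)


def tokens_full_history(turns: int, base_tokens: int, tokens_per_turn: int) -> int:
    """Total prompt tokens when turn t re-sends the rules plus all t turns so far."""
    return sum(base_tokens + t * tokens_per_turn for t in range(1, turns + 1))


# Hypothetical sizes: 500 tokens of rules and state, 50 tokens added per turn.
short_game_fixed = tokens_constant_context(100, 500, 50)
long_game_fixed = tokens_constant_context(200, 500, 50)
short_game_history = tokens_full_history(100, 500, 50)
long_game_history = tokens_full_history(200, 500, 50)
```

Under the fixed-context assumption, doubling the game from 100 to 200 turns doubles the token count. Under the full-history assumption it more than triples, because the per-turn prompt keeps growing. Which regime applies depends on how an agent harness builds its prompts.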

Does this replace standard benchmarks?

No, it augments them. Standard benchmarks are unit tests for knowledge retrieval and basic logic. Game Arena serves as the integration test for agentic behavior. A model can ace the bar exam (static knowledge) but fail at Poker (dynamic reasoning); both metrics are required for a holistic view of intelligence.