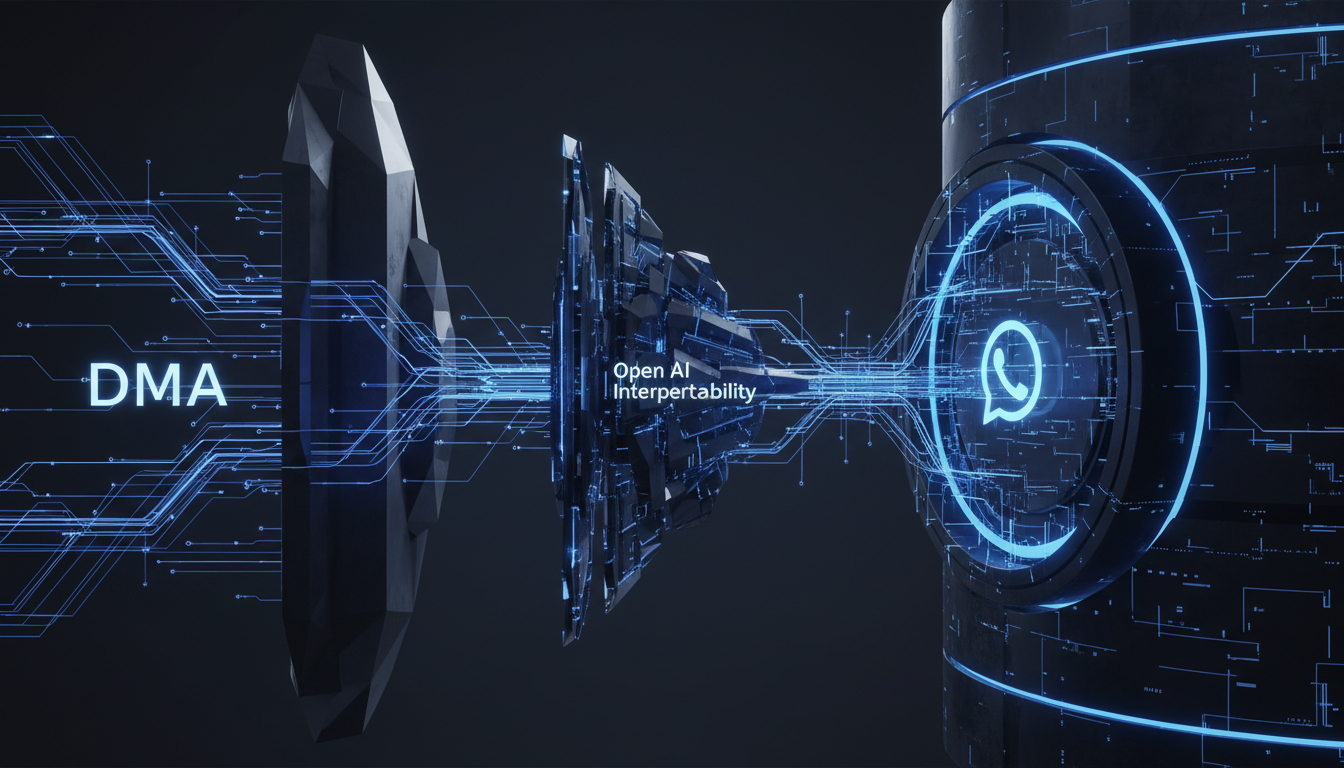

DMA vs. The Walled Garden: Architecting Open AI Interoperability Within the WhatsApp Ecosystem

The European Commission’s recent warning to Meta represents a fundamental shift in the architectural topology of consumer messaging apps. We analyze the technical implications of forcing interoperability on the world’s largest encrypted messaging platform, examining the friction between E2EE protocols, inference latency, and the democratization of AI agent deployment.

The Intersection of Antitrust and API Architecture

The digital landscape is currently witnessing a collision between legislative intent and software architecture. Under the European Union’s Digital Markets Act (DMA), Meta is designated not merely as a social platform, but as a digital gatekeeper. The specific contention, that Meta must not block or degrade rival AI assistants operating within WhatsApp, forces a re-evaluation of how conversational AI is orchestrated within proprietary environments.

From a systems architecture perspective, WhatsApp has traditionally functioned as a closed loop. Clients exchange messages through Meta’s servers, and the content is protected by End-to-End Encryption (E2EE) based on the Signal Protocol, so the servers relay ciphertext they cannot read. Introducing third-party AI agents, such as those powered by OpenAI’s GPT-4 or Google’s Gemini, into this environment necessitates a substantial redesign of the API gateway. It moves us from a purely person-to-person messaging paradigm to a Model-as-a-Service (MaaS) integration layer where WhatsApp acts less like a chat app and more like a neutral UI shell for diverse inference engines.

The Gatekeeper Paradox: Inference Control vs. Open Access

Meta’s strategic pivot toward Llama 3 and its integration into the Meta AI ecosystem is a masterclass in vertical integration. By owning the model weights, the serving infrastructure, and the user interface (WhatsApp), Meta can optimize for token throughput and minimize latency. However, the EU’s stance challenges this vertical stack. If Meta is forced to allow rival bots, the application layer must decouple from the inference layer.

The Latency Challenge in Cross-Provider Inference

Technically, integrating a third-party bot involves complex webhooks and callback structures. When a user queries a rival bot inside WhatsApp, the payload must traverse:

- The WhatsApp client (User Input).

- Meta’s edge servers (Routing).

- The Third-Party API Endpoint (e.g., Anthropic or Mistral).

- The Inference Step (Token Generation).

- The Return Path (Streaming tokens back to the UI).

For native Meta AI, this path can be optimized end to end on internal clusters, potentially using custom silicon such as MTIA (Meta Training and Inference Accelerator). For rival bots, the extra network hops to and from the third-party endpoint add latency. A critical architectural concern is whether Meta will provide a “fast lane” for its own models while throttling external API calls under the guise of security checks or rate limits, a classic antitrust technical battleground.
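The hop list above can be turned into a rough latency budget. The following is a minimal sketch, not a measurement: every number is a made-up placeholder, and the names `Hop`, `NATIVE_PATH`, and `THIRD_PARTY_PATH` are hypothetical. It only illustrates that the third-party path carries two extra network legs that the native path does not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    """One leg of the request path with an assumed latency."""
    name: str
    latency_ms: float


def total_latency_ms(hops: list[Hop]) -> float:
    """Sum the per-hop latencies of a path."""
    return sum(h.latency_ms for h in hops)


# Placeholder figures for illustration only; they are NOT measured values.
NATIVE_PATH = [
    Hop("client -> Meta edge", 40.0),
    Hop("internal inference (time to first token)", 250.0),
    Hop("Meta edge -> client", 40.0),
]

THIRD_PARTY_PATH = [
    Hop("client -> Meta edge", 40.0),
    Hop("Meta edge -> third-party endpoint", 60.0),
    Hop("third-party inference (time to first token)", 250.0),
    Hop("third-party endpoint -> Meta edge", 60.0),
    Hop("Meta edge -> client", 40.0),
]


def added_latency_ms(native: list[Hop], external: list[Hop]) -> float:
    """Extra latency the external path pays relative to the native one."""
    return total_latency_ms(external) - total_latency_ms(native)


if __name__ == "__main__":
    print(added_latency_ms(NATIVE_PATH, THIRD_PARTY_PATH))
```

Under these assumed figures the inference step costs the same on both paths, so the whole difference comes from routing. Any gap beyond that would be a candidate sign of throttling.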

Interoperability Standards and E2EE Friction

The most profound technical hurdle lies in reconciling open AI access with the Signal Protocol. Currently, E2EE ensures that only the sender and recipient possess the decryption keys. An AI agent, by definition, requires access to the cleartext of the conversation to perform inference and generate a response, and any Retrieval-Augmented Generation (RAG) step it runs operates on that same cleartext.

Client-Side vs. Server-Side Decryption

To comply with the EU without breaking privacy promises, architectures may need to shift toward client-side inference coordination or homomorphic encryption, though the latter remains computationally prohibitive for real-time chat. The more likely solution is a strictly defined permission scope where the user explicitly grants a third-party bot ephemeral access to specific message threads. This requires a granular permissioning system within the WhatsApp API that goes beyond simple OAuth flows, ensuring that the “Context Window” provided to the external LLM is sanitized and limited to the immediate interaction.
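One way to picture such a permission scope is a grant object that names a single thread, expires after a fixed time, and caps how many messages reach the external model. The sketch below is purely illustrative: `BotGrant`, `Message`, and `build_context` are hypothetical names, not part of any WhatsApp API, and the policy (only messages sent after the grant, newest first to be kept) is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    thread_id: str
    sender: str
    text: str
    sent_at: float  # seconds since epoch


@dataclass(frozen=True)
class BotGrant:
    """Hypothetical user-issued grant: one bot, one thread, limited lifetime."""
    bot_id: str
    thread_id: str
    granted_at: float
    ttl_seconds: float
    max_messages: int

    def is_active(self, now: float) -> bool:
        return self.granted_at <= now < self.granted_at + self.ttl_seconds


def build_context(grant: BotGrant, messages: list[Message], now: float) -> list[Message]:
    """Return only the messages the bot is allowed to see under this grant."""
    if not grant.is_active(now) or grant.max_messages <= 0:
        return []
    in_scope = [
        m for m in messages
        if m.thread_id == grant.thread_id and m.sent_at >= grant.granted_at
    ]
    in_scope.sort(key=lambda m: m.sent_at)
    # Keep only the most recent messages, oldest first.
    return in_scope[-grant.max_messages:]
```

Messages from other threads, messages that predate the grant, and anything requested after expiry never enter the context handed to the external LLM.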

The Rise of the Multi-Model Orchestration Layer

If the EU prevails, WhatsApp could inadvertently become the world’s largest multi-model orchestration layer. Instead of a monolithic application, it becomes a canvas for Agentic Workflows.

Parameter-Efficient Fine-Tuning (PEFT) and Vendor Diversity

This openness would allow specialized AI vendors to deploy highly fine-tuned models for specific verticals (e.g., a Med-PaLM bot for medical advice or a BloombergGPT integration for finance) directly within the WhatsApp interface. For the technical architect, this suggests a future where the “Super App” is defined not by the features built by the host, but by the diversity of the models it can route to. It shifts the value proposition from the social graph to the “intelligence graph.”
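The routing idea can be sketched as a small dispatch table that maps a vertical to a model handler. This is a minimal illustration under stated assumptions: `ModelRouter` and the handler functions are hypothetical, and the handlers are stubs standing in for real calls to a vendor’s inference endpoint.

```python
from typing import Callable

# A handler takes the user's prompt and returns the model's reply.
Handler = Callable[[str], str]


class ModelRouter:
    """Hypothetical router: picks a model handler by vertical, with a fallback."""

    def __init__(self, default: Handler) -> None:
        self._routes: dict[str, Handler] = {}
        self._default = default

    def register(self, vertical: str, handler: Handler) -> None:
        self._routes[vertical] = handler

    def route(self, vertical: str, prompt: str) -> str:
        handler = self._routes.get(vertical, self._default)
        return handler(prompt)


# Stub handlers; a real deployment would call each vendor's API here.
def general_bot(prompt: str) -> str:
    return f"[general] {prompt}"


def medical_bot(prompt: str) -> str:
    return f"[medical] {prompt}"


def finance_bot(prompt: str) -> str:
    return f"[finance] {prompt}"


router = ModelRouter(default=general_bot)
router.register("medical", medical_bot)
router.register("finance", finance_bot)
```

The host application owns only the table. The value sits in whatever is registered behind it, which is the “intelligence graph” in miniature.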

Implications for RAG Optimization and Context Windows

Third-party developers looking to deploy on this potentially open WhatsApp ecosystem must prioritize RAG optimization. Unlike a web interface where the developer controls the DOM, a WhatsApp bot has a limited UI surface area. The efficiency of the vector search and the relevance of the retrieved chunks become paramount because the output must be concise. Furthermore, managing the context window across a stateless API connection routed through a competitor’s infrastructure (Meta) presents unique challenges in maintaining conversational continuity without bloating token costs.
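Because each webhook call is stateless, the bot has to rebuild its context on every turn, and a simple way to cap token cost is to keep only the most recent turns that fit a budget. The sketch below assumes a crude whitespace-based token estimate (`estimate_tokens` is a stand-in, not a real tokenizer) and a hypothetical `trim_history` helper.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate: one token per whitespace-separated word.

    This is an assumption for illustration; a real bot would use the
    tokenizer that matches its model.
    """
    return max(1, len(text.split()))


def trim_history(turns: list[str], budget: int) -> list[str]:
    """Keep the newest turns whose combined estimated tokens fit the budget."""
    kept: list[str] = []
    used = 0
    for turn in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

For example, `trim_history(["a b c", "d e", "f g h i"], budget=6)` keeps the last two turns and drops the oldest. Older context would then have to come from retrieval rather than from the raw transcript.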

Strategic Outlook: The Meta-Llama Defense

Meta’s likely defense against this commoditization is the open release of Llama’s weights. By making its own models the industry standard for open weights, Meta may technically comply with interoperability while ensuring that “rival” bots are still essentially running on Meta’s architectural philosophy. However, the EU’s warning specifically targets blocking at the application layer, meaning distinct entities like ChatGPT or Claude must be treated with protocol neutrality.

Technical Deep Dive FAQ

How does API latency impact the User Experience of rival bots on WhatsApp?

Latency is the primary degradation factor. Native integration allows for optimized WebSocket connections and pre-caching. External APIs rely on RESTful webhooks, which add network round-trip time (RTT) between Meta’s servers and the third-party endpoint on every exchange. To mitigate this, third-party architectures must utilize aggressive edge caching and token streaming protocols compatible with WhatsApp’s message delivery standards.
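If the delivery channel only accepts whole messages rather than a raw token stream (an assumption here, not a documented constraint), one mitigation is to coalesce streamed tokens into sentence-sized chunks and send each chunk as soon as it completes. The `coalesce_tokens` helper below is a hypothetical sketch of that buffering step.

```python
from typing import Iterable, Iterator


def coalesce_tokens(tokens: Iterable[str], max_chars: int = 200) -> Iterator[str]:
    """Group streamed tokens into message-sized chunks.

    A chunk is flushed when the buffer ends a sentence or reaches max_chars.
    """
    buffer = ""
    for token in tokens:
        buffer += token
        text = buffer.strip()
        ends_sentence = text.endswith((".", "!", "?"))
        if text and (ends_sentence or len(buffer) >= max_chars):
            yield text
            buffer = ""
    # Flush whatever remains once the stream ends.
    if buffer.strip():
        yield buffer.strip()


if __name__ == "__main__":
    stream = ["Hello", " world", ".", " How", " are", " you", "?", " Fine"]
    for chunk in coalesce_tokens(stream):
        print(chunk)
```

The user sees the first sentence as soon as it is complete instead of waiting for the full generation, which hides part of the extra round-trip cost.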

Can End-to-End Encryption (E2EE) be maintained with third-party AI?

Yes, but with caveats. Either the encrypted channel terminates at the AI agent’s server, in which case the bot’s operator (though not Meta) can read the messages, or the user’s device performs local inference (unlikely for large models). The first option effectively treats the AI agent as a “third participant” in the chat, necessitating a key exchange in which the bot holds its own private key.
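The “third participant” idea rests on the user and the bot agreeing on a key that the relaying platform never sees. The toy sketch below shows the principle with textbook finite-field Diffie-Hellman. It is deliberately simplified and NOT secure: the Signal Protocol uses elliptic-curve key agreement with ratcheting rather than this scheme, and the group parameters `P` and `G` are illustrative choices only.

```python
import hashlib
import secrets

# Toy parameters for illustration only; far too small for real use.
P = 2**127 - 1  # a Mersenne prime
G = 3


def keypair() -> tuple[int, int]:
    """Generate a (private, public) pair for the toy group."""
    private = secrets.randbelow(P - 3) + 2  # value in [2, P - 2]
    public = pow(G, private, P)
    return private, public


def shared_key(my_private: int, their_public: int) -> bytes:
    """Derive a 32-byte symmetric key from the Diffie-Hellman shared secret."""
    secret = pow(their_public, my_private, P)
    return hashlib.sha256(secret.to_bytes(16, "big")).digest()


if __name__ == "__main__":
    user_private, user_public = keypair()
    bot_private, bot_public = keypair()
    # Only the public values would transit the platform's servers.
    assert shared_key(user_private, bot_public) == shared_key(bot_private, user_public)
```

Both sides arrive at the same key while the relay only ever handles the public halves. The privacy boundary therefore moves to the bot operator, who becomes a full endpoint of the conversation.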

What are the risks of Prompt Injection in this ecosystem?

Opening WhatsApp to external agents increases the attack surface. Malicious prompt injection could theoretically be used to exfiltrate user data from the context window to external logs. Meta will likely implement a sanitization layer, which itself could be a point of contention if it is perceived as filtering rival bot capabilities.

How does this affect Vector Database integration?

For external bots using RAG, the vector database (e.g., Pinecone, Milvus) sits outside Meta’s infrastructure. The challenge is ensuring secure, authenticated queries from the WhatsApp user context to the external vector store without leaking metadata to the gatekeeper (Meta).
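One piece of this can be sketched without any particular vector database: the bot can key its store by a pseudonymous identifier derived with a secret it holds, so that stored records never carry the raw user identifier. The example below uses an in-memory list in place of a real store such as Pinecone or Milvus; `pseudonymous_id`, `top_k`, and the record layout are hypothetical, and the sketch does not address traffic-level metadata that the routing platform can still observe.

```python
import hashlib
import hmac
import math

Record = tuple[str, list[float], str]  # (owner pseudonym, embedding, text)


def pseudonymous_id(user_id: str, bot_secret: bytes) -> str:
    """Derive an opaque per-user key with HMAC-SHA256 under the bot's secret."""
    return hmac.new(bot_secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], store: list[Record], owner: str, k: int = 2) -> list[str]:
    """Return the k most similar chunks that belong to the given pseudonym."""
    scored = [(cosine(query, vec), text) for o, vec, text in store if o == owner]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]
```

A query for one user can only match records filed under that user’s pseudonym, and anyone without the bot’s secret cannot map a pseudonym back to an account.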